What is AI Scraping? Definition, Benefits, Use Cases.

Adélia Cruz

Neural Network Developer

TL;DR:

- AI Scraping uses machine learning and NLP to automate data extraction, overcoming the fragility of traditional rule-based methods.

- It excels at handling unstructured data, bypassing complex anti-bot measures, and adapting to website layout changes without manual updates.

- Key benefits include 99.5% extraction accuracy, reduced maintenance costs, and the ability to transform raw web content into actionable knowledge.

- Integrating specialized tools like CapSolver is essential for solving advanced CAPTCHAs (reCAPTCHA, Cloudflare) in modern AI scraping workflows.

Introduction

The digital landscape is evolving quickly, and the methods we use to gather information must keep up. AI scraping represents the next generation of data collection, moving beyond simple scripts to systems that interpret web pages much as a human reader would. For businesses in 2026, the ability to extract high-quality data at scale is no longer a luxury but a competitive necessity. This article explores how AI-powered extraction is replacing traditional methods, the technical mechanics behind it, and how you can build an AI agent web scraper of your own. Whether you are a data scientist or a business leader, understanding this shift will help you navigate the data economy.

What is AI Scraping?

AI scraping is the process of using artificial intelligence, specifically machine learning (ML) and natural language processing (NLP), to automatically extract data from digital sources. Unlike traditional web scraping, which relies on fixed CSS selectors or XPath expressions, AI scraping interprets the visual and textual context of a page. This allows it to identify a "price" or an "author" regardless of how the underlying HTML is structured.

The global web scraping market is projected to reach $12.34 billion by 2025, according to recent Market Growth Reports. This growth is largely driven by the demand for high-quality training data for Large Language Models (LLMs). AI scraping doesn't just collect data; it gathers knowledge by understanding relationships between entities, performing sentiment analysis, and cleaning data in real-time.

How Does AI Scraping Work?

The mechanics of AI-powered extraction involve a sophisticated multi-layer approach that mimics human browsing behavior while leveraging massive computational power.

| Layer | Functionality | Key Technologies |
|---|---|---|
| Data Acquisition | Navigates websites, handles JavaScript, and manages proxies. | Playwright, Puppeteer, Headless Chrome |
| Interpretation | Identifies relevant fields (titles, prices, reviews) using context. | LLMs (GPT-4, Claude), Computer Vision |
| Adaptability | Self-heals when layouts change by re-mapping data points. | Reinforcement Learning, Pattern Recognition |
| Security Navigation Layer | Solves security challenges like CAPTCHAs and rate limits. | CapSolver, AI-driven Browser Fingerprinting |

In a typical workflow, an AI agent receives a natural language prompt. It then navigates to the target URL, uses computer vision to "see" the page layout, and employs NLP to extract specific information. If it encounters a roadblock such as a CAPTCHA, it can hand the challenge to a solver service and then resume extraction, keeping the flow of data uninterrupted.
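The workflow described here can be sketched as a small orchestration function. This is only an illustrative outline: `ScrapeJob`, `run_job`, and the four callables are hypothetical names, and the stubs stand in for a real browser, challenge solver, and LLM.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ScrapeJob:
    prompt: str  # natural language instruction, e.g. "get the product price"
    url: str     # page the agent should visit


def run_job(
    job: ScrapeJob,
    fetch: Callable[[str], str],             # acquisition layer
    is_blocked: Callable[[str], bool],       # detects a challenge page
    solve_challenge: Callable[[str], str],   # security navigation layer
    extract: Callable[[str, str], dict],     # interpretation layer
) -> dict:
    """Fetch the page, clear a challenge if one appears, then extract."""
    page = fetch(job.url)
    if is_blocked(page):
        page = solve_challenge(job.url)
    return extract(job.prompt, page)


# Stubs so the sketch runs without network access or model calls.
def stub_fetch(url: str) -> str:
    return "CHALLENGE"


def stub_is_blocked(page: str) -> bool:
    return page == "CHALLENGE"


def stub_solve(url: str) -> str:
    return "Price: $19.99"


def stub_extract(prompt: str, page: str) -> dict:
    # Stands in for an LLM call; here it only echoes what it was given.
    return {"prompt": prompt, "raw_text": page}


result = run_job(
    ScrapeJob("get the product price", "https://example.com/item"),
    stub_fetch, stub_is_blocked, stub_solve, stub_extract,
)
print(result)
```

Passing each layer in as a callable keeps the layers independent, so a browser driver or solver can be swapped without touching the orchestration logic.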

AI Scraping vs. Traditional Web Scraping

The transition from traditional to AI-driven methods is often compared to moving from a rigid assembly line to a flexible robotic system.

Traditional scraping is built on "if-then" logic. If a developer tells the script to look for a price in a specific <div> tag, and the website owner changes that tag to a <span>, the scraper breaks. This leads to high maintenance costs and frequent downtime.

AI scraping, however, uses semantic understanding. It knows that a dollar sign followed by a number is likely a price, regardless of the HTML tag used. This resilience is why AI-driven tools are seeing a 30–40% increase in extraction speed compared to manual rule-setting, as noted in Scrapingdog's 2025 trends report.
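A minimal sketch makes the contrast concrete. The regular expression below is only a crude stand-in for real semantic understanding (an actual AI scraper would use a model, not a pattern), and the function names and sample HTML are invented for illustration.

```python
import re
from html.parser import HTMLParser


class TagTextCollector(HTMLParser):
    """Collects text that appears inside one specific tag name."""

    def __init__(self, tag: str):
        super().__init__()
        self.tag = tag
        self.depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag == self.tag:
            self.depth += 1

    def handle_endtag(self, tag):
        if tag == self.tag and self.depth > 0:
            self.depth -= 1

    def handle_data(self, data):
        if self.depth > 0:
            self.chunks.append(data)


def price_by_tag(html: str, tag: str = "div"):
    """Rule-based approach: only looks inside the hard-coded tag."""
    collector = TagTextCollector(tag)
    collector.feed(html)
    collector.close()
    text = "".join(collector.chunks).strip()
    return text or None


# A dollar sign followed by a number, with optional thousands separators.
PRICE_PATTERN = re.compile(r"\$\s?\d[\d,]*(?:\.\d{2})?")


def price_by_pattern(html: str):
    """Tag-agnostic approach: strips markup, then looks for a price shape."""
    text = re.sub(r"<[^>]+>", " ", html)
    match = PRICE_PATTERN.search(text)
    return match.group(0) if match else None


old_layout = '<div class="price">$1,299.00</div>'
new_layout = '<span class="amount">$1,299.00</span>'

print(price_by_tag(old_layout), price_by_tag(new_layout))
print(price_by_pattern(old_layout), price_by_pattern(new_layout))
```

The tag-based function returns nothing once the `<div>` becomes a `<span>`, while the pattern-based one finds the price in both layouts.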

Comparison Summary

| Feature | Traditional Web Scraping | AI Scraping |
|---|---|---|
| Logic Basis | Hard-coded rules (CSS/XPath) | Semantic & Visual Understanding |
| Maintenance | High (breaks with layout changes) | Low (self-healing capabilities) |
| Data Quality | Requires manual cleaning | Automated normalization & cleaning |
| Complexity | Struggles with dynamic/unstructured data | Excels at images, PDFs, and JS-heavy sites |
| Success Rate | Moderate (easily blocked) | High (mimics human behavior) |

Top Benefits of AI Scraping

Implementing AI into your data pipeline offers several transformative advantages that go beyond simple automation.

- Unmatched Resilience: AI scrapers can adapt to minor website updates without human intervention. This "self-healing" property ensures that your data feeds remain stable even when target sites undergo frequent redesigns.

- Handling Unstructured Data: Most of the web's valuable information is unstructured: think social media comments, forum posts, or video transcripts. AI agents can use the Model Context Protocol (MCP) to pipe this raw information directly into analytical tools.

- Superior Anti-Bot Bypassing: Modern websites use advanced behavioral analysis to block bots. AI scrapers can mimic human mouse movements, typing speeds, and browsing patterns. When faced with a challenge, they can call a CAPTCHA-solving service such as CapSolver from within the scraping workflow to keep data flowing around the clock.

- Cost-Efficiency at Scale: While the initial setup of an AI system might be higher, the long-term savings in developer hours spent fixing broken scrapers are massive.

Common Use Cases for AI Scraping

AI scraping is being utilized across various industries to drive innovation and efficiency. The versatility of intelligent extraction allows organizations to tackle data challenges that were previously insurmountable.

E-commerce Intelligence and Dynamic Pricing

In the hyper-competitive world of online retail, prices change by the minute. AI scraping enables retailers to monitor competitor prices, stock levels, and customer sentiment across thousands of global storefronts in real-time. Beyond simple price tracking, AI can analyze product descriptions and images to ensure that comparisons are accurate, even when competitors use different naming conventions. This level of precision allows for dynamic pricing strategies that can significantly boost profit margins.
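Matching the same product across stores that name it differently can be approximated with fuzzy string comparison. The sketch below uses the standard library's `difflib.SequenceMatcher` as a simple substitute for the embedding or image models a production system would likely use; the helper names, sample titles, and the 0.6 threshold are assumptions.

```python
import re
from difflib import SequenceMatcher


def normalize_title(title: str) -> str:
    """Lowercase, drop punctuation, and sort words so word order is ignored."""
    words = re.findall(r"[a-z0-9]+", title.lower())
    return " ".join(sorted(words))


def title_similarity(a: str, b: str) -> float:
    """Similarity in [0, 1] between two normalized product titles."""
    return SequenceMatcher(None, normalize_title(a), normalize_title(b)).ratio()


def best_match(title: str, candidates: list, threshold: float = 0.6):
    """Return the most similar candidate, or None if nothing is close enough."""
    if not candidates:
        return None
    score, candidate = max((title_similarity(title, c), c) for c in candidates)
    return candidate if score >= threshold else None


our_product = "Acme Wireless Mouse M200 Black"
competitor_titles = [
    "Mouse, Wireless - ACME M200 (Black)",
    "Acme Wired Keyboard K100",
    "Garden Hose 50ft",
]

print(best_match(our_product, competitor_titles))
```

Sorting the words before comparison is a deliberate choice: it makes "Mouse, Wireless - ACME" and "Acme Wireless Mouse" compare as identical, at the cost of ignoring word order entirely.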

High-Fidelity AI Training Data

The current AI revolution is fueled by data. Gathering massive datasets to train the next generation of LLMs requires high-fidelity data that only AI-driven extraction can provide. Traditional scrapers often introduce "noise" into datasets by failing to filter out irrelevant boilerplate content. AI scrapers, however, can distinguish between the core content of an article and the surrounding advertisements or navigation links, ensuring that the training data is clean and contextually relevant.
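To illustrate what separating core content from boilerplate means in practice, here is a rough heuristic that skips text inside navigation, sidebar, and footer elements. It is a rule-based simplification and not the learned classification the paragraph describes; the tag list and sample page are assumptions.

```python
from html.parser import HTMLParser

# Elements whose text is treated as boilerplate in this sketch.
SKIPPED_TAGS = {"nav", "aside", "footer", "script", "style"}


class MainTextExtractor(HTMLParser):
    """Keeps text that is not nested inside any skipped element."""

    def __init__(self):
        super().__init__()
        self.skip_depth = 0
        self.parts = []

    def handle_starttag(self, tag, attrs):
        if tag in SKIPPED_TAGS:
            self.skip_depth += 1

    def handle_endtag(self, tag):
        if tag in SKIPPED_TAGS and self.skip_depth > 0:
            self.skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and self.skip_depth == 0:
            self.parts.append(text)


def extract_main_text(html: str) -> str:
    parser = MainTextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.parts)


page = """
<html><body>
<nav><a href="/">Home</a> <a href="/deals">Deals</a></nav>
<article><h1>Rates hold steady</h1><p>The central bank left rates unchanged.</p></article>
<aside>Sponsored: buy now!</aside>
<footer>Copyright Example Corp</footer>
</body></html>
"""

print(extract_main_text(page))
```

A heuristic like this fails on pages that do not use semantic HTML elements, which is exactly the gap a model-based extractor is meant to close.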

Financial Market Analysis and Alternative Data

Hedge funds and financial institutions are increasingly turning to alternative data to gain an edge. This includes scraping news sites, regulatory filings, social media trends, and even satellite imagery data represented in tables. AI scraping can process these diverse sources simultaneously, identifying emerging market trends before they hit the mainstream. By performing real-time sentiment analysis on financial news, AI agents can provide traders with actionable insights in seconds.

Real Estate and Lead Generation

The real estate industry relies heavily on up-to-date listings from multiple platforms. AI scraping can aggregate these listings, normalize the data (e.g., converting square footage or currency), and identify undervalued properties automatically. Similarly, for B2B sales, AI can identify and qualify potential leads from professional networks and company directories by analyzing job titles, company growth patterns, and recent news mentions, creating a highly targeted sales pipeline.
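The normalization step can be sketched as converting every listing to one currency and one area unit before comparing them. The exchange rates below are placeholders rather than real market rates, and price per square foot is only a crude proxy for "undervalued"; all names are hypothetical.

```python
from dataclasses import dataclass

SQFT_PER_SQM = 10.7639  # square feet in one square metre (rounded)


@dataclass
class Listing:
    price_usd: float
    area_sqft: float

    @property
    def usd_per_sqft(self) -> float:
        return self.price_usd / self.area_sqft


def normalize_listing(price, currency, area, area_unit, usd_rates) -> Listing:
    """Convert a raw listing into USD and square feet."""
    if currency not in usd_rates:
        raise ValueError(f"no exchange rate for {currency!r}")
    if area_unit == "sqft":
        area_sqft = area
    elif area_unit == "sqm":
        area_sqft = area * SQFT_PER_SQM
    else:
        raise ValueError(f"unknown area unit {area_unit!r}")
    return Listing(price_usd=price * usd_rates[currency], area_sqft=area_sqft)


# Placeholder rates (USD per unit of currency), for illustration only.
EXAMPLE_RATES = {"USD": 1.0, "EUR": 1.25}

listings = [
    normalize_listing(300_000, "USD", 1_500, "sqft", EXAMPLE_RATES),
    normalize_listing(200_000, "EUR", 100, "sqm", EXAMPLE_RATES),
]

# Once units agree, listings from different platforms are directly comparable.
cheapest = min(listings, key=lambda listing: listing.usd_per_sqft)
print(cheapest)
```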

Technical Implementation: Building a Resilient Pipeline

To truly leverage AI scraping, one must understand the architecture of a resilient data pipeline. It starts with choosing the right environment. Modern developers often prefer containerized solutions that can scale horizontally as the volume of target URLs increases.

The Role of Headless Browsers

Tools like Playwright and Puppeteer are the workhorses of the acquisition layer. They allow AI agents to interact with websites just like a human would—clicking buttons, scrolling through infinite feeds, and waiting for asynchronous JavaScript to load. However, running these browsers at scale is resource-intensive. AI optimization can help by determining which pages require a full browser render and which can be fetched via faster, lighter HTTP requests.
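One simple way to decide between a full browser render and a plain HTTP fetch is to inspect the HTML returned by the lightweight request first. The heuristic below is an assumption for illustration (a page with scripts but almost no visible text probably needs rendering), not a description of how any particular tool makes this decision; the 200-character threshold is arbitrary.

```python
import re


def visible_text_length(html: str) -> int:
    """Length of the text left after removing scripts, styles, and tags."""
    without_code = re.sub(r"(?is)<(script|style)\b.*?</\1>", " ", html)
    text = re.sub(r"(?s)<[^>]+>", " ", without_code)
    return len(" ".join(text.split()))


def needs_browser_render(html: str, min_text_chars: int = 200) -> bool:
    """True when the page has scripts but too little server-rendered text."""
    script_count = len(re.findall(r"(?i)<script\b", html))
    return script_count > 0 and visible_text_length(html) < min_text_chars


# An empty application shell that fills itself in with JavaScript.
spa_shell = (
    '<html><body><div id="root"></div>'
    '<script src="/app.js"></script></body></html>'
)

# A page whose content is already present in the initial HTML.
static_page = (
    "<html><body><p>"
    + "Plain server-rendered text. " * 20
    + "</p><script>console.log('analytics')</script></body></html>"
)

print(needs_browser_render(spa_shell))
print(needs_browser_render(static_page))
```

Pages flagged by the check would go to the headless browser queue, while the rest can be parsed directly from the cheaper HTTP response.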

Integrating Intelligence at the Edge

The most advanced AI scraping setups perform data extraction and cleaning "at the edge." This means that instead of sending raw HTML back to a central server for processing, the AI agent performs the extraction locally. This reduces latency and bandwidth costs. By using lightweight LLMs or specialized NLP models, these agents can deliver structured JSON data directly from the browser environment.

Managing Security Challenges

As mentioned earlier, the "Security Navigation Layer" is critical. A pipeline is only as strong as its weakest link. If your AI agent is blocked by a Cloudflare challenge, the entire workflow grinds to a halt. This is why a robust integration with a service like CapSolver is non-negotiable. It provides the necessary "credentials" for your AI agent to pass through security checkpoints without triggering alarms. Best practices involve rotating user agents, managing session cookies intelligently, and using high-quality residential proxies to mask the scraper's footprint.
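Two of the practices listed above, rotating user agents and keeping session cookies, can be sketched with the Python standard library alone. The `RotatingSession` class and the user-agent strings are hypothetical placeholders; proxy handling and any CapSolver integration are deliberately left out because their details are not covered here.

```python
import itertools
import urllib.request
from http.cookiejar import CookieJar

# Placeholder identifiers; real deployments would supply their own strings.
USER_AGENTS = [
    "ExampleAgent/1.0",
    "ExampleAgent/2.0",
]


class RotatingSession:
    """Cycles through user agents while keeping one shared cookie jar."""

    def __init__(self, user_agents):
        if not user_agents:
            raise ValueError("at least one user agent is required")
        self._agents = itertools.cycle(user_agents)
        self.cookies = CookieJar()
        # The cookie processor stores and resends cookies across requests.
        self.opener = urllib.request.build_opener(
            urllib.request.HTTPCookieProcessor(self.cookies)
        )

    def build_request(self, url: str) -> urllib.request.Request:
        """Create a request carrying the next user agent in the rotation."""
        return urllib.request.Request(
            url, headers={"User-Agent": next(self._agents)}
        )

    def fetch(self, url: str, timeout: float = 10.0) -> bytes:
        """Send the request through the cookie-aware opener."""
        with self.opener.open(self.build_request(url), timeout=timeout) as resp:
            return resp.read()


session = RotatingSession(USER_AGENTS)
for _ in range(3):
    request = session.build_request("https://example.com/")
    # urllib stores header names capitalized as "User-agent".
    print(request.get_header("User-agent"))
```

The loop only builds requests and prints the chosen header, so the sketch runs without network access; calling `fetch` would perform the actual request.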

Overcoming Security Obstacles with CapSolver

One of the biggest hurdles in AI scraping is the increasing sophistication of anti-bot defenses. Websites now use reCAPTCHA v3, Cloudflare Turnstile, and AWS WAF to protect their data. This is where a specialized solution like CapSolver becomes indispensable. By providing an AI-powered API that solves these challenges automatically, CapSolver allows your AI scrapers to focus on what they do best: extracting value. Pairing LLM-driven agents with automated CAPTCHA solving keeps them from getting stuck behind a "Verify you are human" wall.

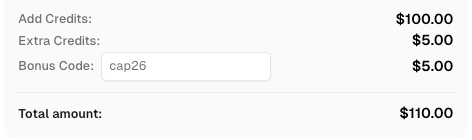

Use code CAP26 when signing up at CapSolver to receive bonus credits!

Conclusion

AI scraping is not just a trend; it is the inevitable evolution of how we interact with web data. By combining the semantic power of LLMs with the reliability of tools like CapSolver, organizations can build data pipelines that are faster, smarter, and more resilient than ever before. As we move further into 2026, the gap between those using traditional scripts and those leveraging AI will only widen. Now is the time to upgrade your infrastructure and embrace the future of intelligent data extraction.

FAQ

1. Is AI scraping legal?

Web scraping is generally legal for publicly available data, but it must comply with the website's Terms of Service and data privacy laws like GDPR. Recent rulings, such as Meta v. Bright Data (2024), in which the court found that Meta's terms did not bar scraping public data while logged out, show that outcomes often turn on exactly what a site's contractual terms cover.

2. How does AI scraping handle CAPTCHAs?

AI scrapers often integrate with third-party APIs like CapSolver, which use machine learning models to solve complex challenges like reCAPTCHA and Cloudflare Turnstile automatically.

3. Do I need to be a coder to use AI scraping?

While some technical knowledge helps, many modern AI scraping tools offer no-code or low-code interfaces where you can describe your requirements in plain English.

4. What is the main difference between a crawler and a scraper?

A crawler (like Googlebot) navigates the web to index pages, while a scraper extracts specific data points from those pages. AI enhances both by making navigation and extraction more "human-like."

5. Can AI scraping handle images and PDFs?

Yes, AI scrapers use computer vision and OCR (Optical Character Recognition) to extract text and data from non-textual formats, which traditional scrapers cannot do.
