Web Crawling vs. Web Scraping: The Essential Difference

Sora Fujimoto

AI Solutions Architect

TL;DR: Web crawling is the process of discovering and indexing web pages by following links, primarily used by search engines and for site audits. Web scraping is the targeted extraction of specific data from those discovered pages, essential for market research and data analysis. Both processes, especially web scraping, are frequently interrupted by advanced access controls such as AWS WAF. These challenges can be effectively addressed using a specialized solution like CapSolver.

Introduction

The digital world is built on data, and the processes of web crawling and web scraping are the fundamental mechanisms for gathering this vast information. While often used interchangeably, they represent two distinct, yet complementary, stages in the data acquisition pipeline. Understanding the difference between web crawling and web scraping is crucial for anyone building data-driven applications, conducting market research, or optimizing for search engines.

This guide is written for data scientists, SEO specialists, and developers. We will define each process, highlight the core differences, walk through 10 use cases where they are applied, and show how tools like CapSolver can help with common access challenges. By the end, you will have a practical framework for implementing efficient and compliant web data strategies.

Web Crawling vs. Web Scraping: The Core Distinction

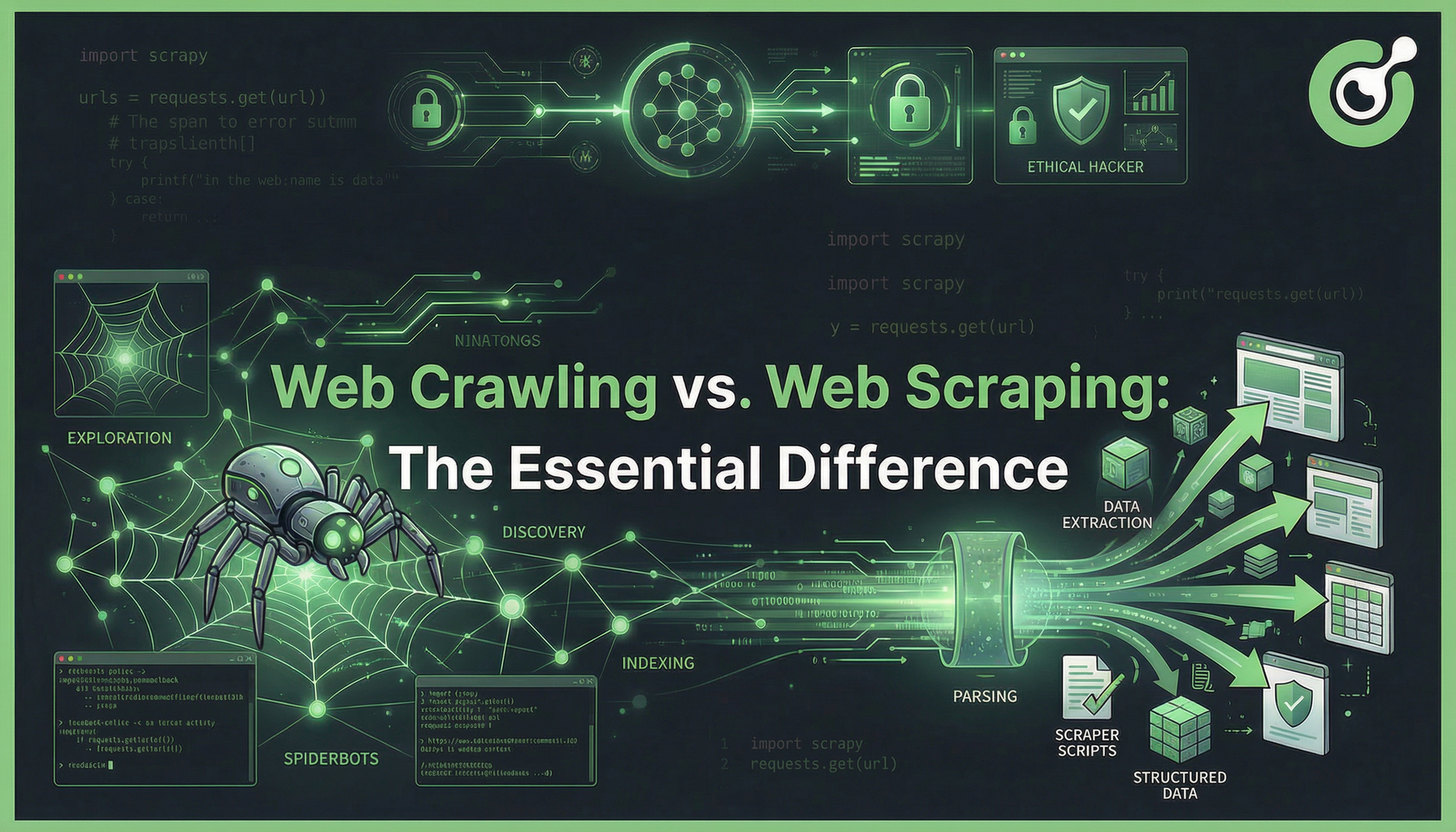

At its heart, the difference between web crawling and web scraping lies in their primary objective. Web crawling is about discovery and mapping, while web scraping is about extraction and structuring.

A web crawler, like Googlebot, systematically browses the World Wide Web, following hyperlinks from one page to another. Its goal is to build a comprehensive index of the internet. A web scraper, on the other hand, targets specific data points—such as product prices, contact information, or article text—from a known set of URLs, transforming unstructured HTML into clean, usable data formats like CSV or JSON.

Comparison Summary: Crawling vs. Scraping

| Feature | Web Crawling | Web Scraping |
|---|---|---|
| Primary Goal | Discovery, Indexing, Mapping Site Structure | Data Extraction, Structuring, Analysis |
| Output | A list of URLs, site map, or index of pages | Structured data (JSON, CSV, Database records) |
| Scope | Site-level or Web-wide (following all links) | Page-level (targeting specific elements) |
| Analogy | A librarian cataloging all books in a library | A researcher extracting a specific quote from a book |
| Key Tools | Scrapy, Apache Nutch, Googlebot | BeautifulSoup, Puppeteer, Selenium, Custom Scripts |
| Typical Use Case | Search Engine Optimization (SEO), Site Audits | Price Monitoring, Lead Generation, Market Research |

Web Crawling: The Engine of Discovery

Web crawling is the automated process of systematically browsing the World Wide Web. It is the foundational step for search engines to discover new and updated content.

Purpose and Mechanism

The primary purpose of web crawling is to create a map of the internet or of a specific website. A crawler starts with a seed list of URLs, fetches the content of those pages, and parses the HTML to find new hyperlinks. It then repeats the process on each newly discovered link, expanding its reach. Well-behaved crawlers follow the rules in the site's robots.txt file, which specifies which parts of the site a crawler may access.
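The fetch, parse, and follow loop described above can be sketched in a few lines of Python using only the standard library. This is a minimal illustration rather than a production crawler: the `crawl` function, the `LinkParser` class, and the in-memory `site` dictionary are names invented for this example, and the sketch leaves out robots.txt checks, politeness delays, and error handling. The `fetch` argument is assumed to be any function that takes a URL and returns HTML (or `None` on failure), which keeps the example runnable without network access.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin, urlparse


class LinkParser(HTMLParser):
    """Collects the href value of every <a> tag in a page."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(seeds, fetch, max_pages=100):
    """Breadth-first crawl restricted to the hosts of the seed URLs.

    `fetch` is a caller-supplied function (an assumption of this sketch)
    that takes a URL and returns its HTML as a string, or None on failure.
    """
    allowed_hosts = {urlparse(url).netloc for url in seeds}
    queue = deque(seeds)
    seen = set(seeds)
    visited = []

    while queue and len(visited) < max_pages:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue
        visited.append(url)

        parser = LinkParser()
        parser.feed(html)
        for href in parser.links:
            # Resolve relative links against the current page and drop #fragments.
            absolute, _fragment = urldefrag(urljoin(url, href))
            if urlparse(absolute).netloc in allowed_hosts and absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return visited


# Hypothetical three-page site held in memory instead of fetched over HTTP.
site = {
    "https://example.com/": '<a href="/a">A</a> <a href="/b">B</a>',
    "https://example.com/a": '<a href="/b">B</a> <a href="https://other.org/">x</a>',
    "https://example.com/b": '<a href="/#top">home</a>',
}
print(crawl(["https://example.com/"], site.get))
```

The `seen` set is what keeps the crawler from looping when pages link back to each other, and the host check keeps it from wandering off the seed site. In a real deployment, `fetch` would wrap an HTTP client and consult robots.txt before each request.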

For SEO professionals, crawling is essential: a page has to be crawled before it can be indexed and ranked, which makes a successful crawl the first step toward organic visibility. As ClickGuard notes, if a page isn't crawled, it cannot be ranked.

Key Tools for Web Crawling

- Scrapy: A fast, high-level web crawling and web scraping framework for Python. It is ideal for large-scale, complex crawling projects.

- Apache Nutch: An open-source web crawler that can be used to build large-scale search engines.

- Custom Crawlers: Built using libraries like requests and BeautifulSoup in Python, often for smaller, highly customized tasks.

Web Scraping: The Art of Data Extraction

Web scraping is the technique of extracting specific data from websites. It is a more focused process that occurs after a page has been discovered by a crawler or when the URL is already known.

Purpose and Mechanism

The goal of web scraping is to convert the human-readable, unstructured data on a webpage into a machine-readable, structured format. This involves using selectors (like CSS selectors or XPath) to pinpoint the exact data elements—such as a product name, a price, or a review score—and then extracting and cleaning that text.
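As a rough illustration of selector-based extraction, the sketch below pulls a product name, price, and rating out of a small page using the limited XPath support in Python's standard `xml.etree.ElementTree`. The markup, the class names, and the `extract_product` function are all made up for this example, and the approach assumes well-formed markup. Real-world HTML is rarely that tidy, so in practice a tolerant parser such as BeautifulSoup would usually take its place.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed product page used only for this sketch.
PAGE = """
<html><body>
  <div class="product">
    <h1 class="name">Example Widget</h1>
    <span class="price">$19.99</span>
    <span class="rating">4.5</span>
  </div>
</body></html>
"""


def extract_product(markup):
    """Turn the page markup into a flat dictionary of product fields."""
    root = ET.fromstring(markup)

    def text_of(css_class):
        # XPath-style selector: any element whose class attribute matches exactly.
        node = root.find(f".//*[@class='{css_class}']")
        if node is None or node.text is None:
            return None
        return node.text.strip()

    return {
        "name": text_of("name"),
        "price": text_of("price"),
        "rating": text_of("rating"),
    }


print(extract_product(PAGE))
```

The returned dictionary is the "structured format" the paragraph above refers to: it can be written straight to JSON or a CSV row.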

The market for web scraping is experiencing significant growth. The global web scraping market is projected to reach USD 2.00 billion by 2030, driven by the increasing demand for alternative data sources in finance, e-commerce, and market intelligence. Mordor Intelligence highlights this rapid expansion.

Key Tools for Web Scraping

- BeautifulSoup: A Python library for parsing HTML and XML documents, often used in conjunction with the requests library.

- Selenium/Puppeteer: Browser automation tools used to scrape dynamic content (JavaScript-rendered pages) by simulating a real user's interaction.

- Dedicated Scraping APIs: Services that handle the infrastructure, proxy rotation, and anti-bot bypass for the user.

10 Detailed Solutions and Use Cases for Crawling and Scraping

Mastering both web crawling and web scraping allows for the creation of powerful, data-driven solutions. Here are 10 detailed applications, demonstrating the synergy between discovery and extraction.

1. Comprehensive SEO Site Audits (Crawling)

Goal: Identify broken links, redirect chains, and site structure issues that hinder search engine indexing.

Process: A crawler starts at the homepage and follows every internal link. It records the status code (200, 404, 301), page title, and depth for every URL.

Value: Ensures that all important pages are discoverable by search engine crawlers, which is fundamental for good SEO.
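To make the audit step concrete, here is a small sketch of how the records collected during a crawl might be analyzed. The `CrawlRecord` structure and the `audit` function are hypothetical names chosen for this example, and the sketch assumes the crawler has already stored the redirect target for each 3xx response.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CrawlRecord:
    url: str
    status: int
    title: str
    depth: int
    redirect_to: Optional[str] = None  # target URL for 3xx responses


def audit(records):
    """Report broken URLs and redirect chains of two or more hops."""
    by_url = {record.url: record for record in records}
    broken = [record.url for record in records if record.status >= 400]

    chains = []
    for record in records:
        hops = [record.url]
        current = record
        # Follow recorded redirects; the `not in hops` check stops redirect loops.
        while current is not None and current.redirect_to and current.redirect_to not in hops:
            hops.append(current.redirect_to)
            current = by_url.get(current.redirect_to)
        if len(hops) > 2:  # start URL plus at least two redirect targets
            chains.append(hops)
    return {"broken": broken, "redirect_chains": chains}


records = [
    CrawlRecord("https://example.com/", 200, "Home", 0),
    CrawlRecord("https://example.com/old", 301, "", 1, "https://example.com/older"),
    CrawlRecord("https://example.com/older", 301, "", 2, "https://example.com/new"),
    CrawlRecord("https://example.com/new", 200, "New page", 3),
    CrawlRecord("https://example.com/missing", 404, "Not found", 1),
]
print(audit(records))
```

Note that a chain is reported from every URL that starts one, so a very long chain will also show up as shorter sub-chains. Deduplicating those is left out to keep the sketch short.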

2. Real-Time E-commerce Price Monitoring (Scraping)

Goal: Track competitor pricing for thousands of products to adjust dynamic pricing strategies.

Process: A scraper is given a list of known product URLs. It targets the specific HTML element containing the price and extracts the numerical value.

Value: Provides a competitive edge by allowing instant reaction to market changes.
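Once the price element's text has been extracted, it still has to be turned into a number. The helper below is one possible way to do that. The `parse_price` name is invented for this example, and the pattern assumes US-style formatting (comma as thousands separator, dot as decimal separator), so other locales would need a different rule.

```python
import re
from decimal import Decimal

# Digits with optional thousands separators and an optional decimal part.
PRICE_RE = re.compile(r"\d[\d,]*(?:\.\d+)?")


def parse_price(text):
    """Return the first number found in `text` as a Decimal, or None."""
    match = PRICE_RE.search(text)
    if match is None:
        return None
    return Decimal(match.group().replace(",", ""))


print(parse_price("$1,299.00"))
print(parse_price("Now only USD 19.99!"))
print(parse_price("Out of stock"))
```

`Decimal` is used instead of `float` so that prices keep their exact cents when they are compared or stored.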

3. Lead Generation and Contact Data Acquisition (Scraping)

Goal: Extract contact details (email, phone number) from business directories or professional networking sites.

Process: A crawler first discovers relevant company profile pages. The scraper then extracts the specific text patterns corresponding to email addresses and phone numbers from those pages.

Value: Fuels sales and marketing pipelines with fresh, targeted contact information.

4. Search Engine Indexing and Ranking (Crawling)

Goal: The core function of major search engines like Google and Bing.

Process: Massive, distributed crawlers continuously discover new content. The discovered pages are then passed to an indexer, which processes the text and stores it in a massive database for quick retrieval.

Value: Makes the vastness of the internet searchable for billions of users.

5. Content Aggregation and News Feeds (Crawling & Scraping)

Goal: Create a centralized platform that pulls articles from multiple news sources.

Process: A crawler monitors the sitemaps and category pages of target news sites. When a new article URL is found, a scraper extracts the article title, body text, author, and publication date.

Value: Powers personalized news readers and content analysis platforms.
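The discovery half of this workflow often comes down to reading a sitemap and comparing it with what has already been collected. The sketch below parses a sitemap in the standard sitemaps.org XML format and returns only the URLs not seen before. The `new_article_urls` function, the sample sitemap, and the `known` set are illustrative assumptions; a real aggregator would download the sitemap and persist the known URLs between runs.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical sitemap content, inlined so the sketch runs offline.
SAMPLE_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://news.example.com/articles/1</loc></url>
  <url><loc>https://news.example.com/articles/2</loc></url>
  <url><loc>https://news.example.com/articles/3</loc></url>
</urlset>"""


def new_article_urls(sitemap_xml, known_urls):
    """Return sitemap URLs that are not in `known_urls`, in sitemap order."""
    root = ET.fromstring(sitemap_xml)
    urls = [
        loc.text.strip()
        for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)
        if loc.text
    ]
    return [url for url in urls if url not in known_urls]


known = {"https://news.example.com/articles/1"}
print(new_article_urls(SAMPLE_SITEMAP, known))
```

Each URL returned here would then be handed to the scraper that extracts the title, body text, author, and publication date.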

6. Market Research and Sentiment Analysis (Scraping)

Goal: Gather customer reviews and comments from forums, social media, and e-commerce sites to gauge public opinion on a product.

Process: Scrapers target the review sections of product pages, extracting the text and star ratings. This data is then fed into Natural Language Processing (NLP) models for sentiment scoring.

Value: Provides actionable insights into product strengths and weaknesses directly from the consumer voice.

7. Website Change Detection (Crawling & Scraping)

Goal: Monitor a specific set of pages for unauthorized changes, regulatory updates, or stock availability.

Process: A crawler visits each target URL on a schedule. A scraper extracts the page's core content and computes a hash of it. If the hash differs from the one stored on the previous visit, an alert is triggered.

Value: Essential for compliance, competitive intelligence, and inventory tracking.
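The hash comparison at the heart of change detection is simple to sketch with Python's `hashlib`. The function names below are invented for this example, and the whitespace normalization is one reasonable choice among several: it keeps purely cosmetic reformatting from triggering an alert.

```python
import hashlib


def content_fingerprint(text):
    """Hash the text after collapsing runs of whitespace."""
    normalized = " ".join(text.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def has_changed(previous_fingerprint, current_text):
    """Compare the stored fingerprint with the freshly extracted content."""
    return content_fingerprint(current_text) != previous_fingerprint


baseline = content_fingerprint("In stock:  3 units")
print(has_changed(baseline, "In stock: 3 units\n"))  # whitespace-only difference
print(has_changed(baseline, "In stock: 2 units"))    # real content change
```

Storing only the fingerprint, rather than the full page, keeps the monitoring state small even when thousands of pages are tracked.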

8. Academic Research and Citation Mapping (Crawling)

Goal: Map the citation network within a field of study.

Process: A crawler starts with a key paper and extracts all the references and all the papers that cite it. It follows these links to build a graph of academic influence.

Value: Helps researchers identify key authors and emerging trends in their domain.

9. Data Migration and Archiving (Scraping)

Goal: Extract all content from an old website before decommissioning it, or archive a site for historical purposes.

Process: A crawler identifies all URLs on the old site. A scraper systematically extracts the full HTML content of each page and saves it locally.

Value: Preserves valuable data and content during platform transitions.

10. Training Machine Learning Models (Scraping)

Goal: Acquire large, diverse datasets for training AI models, such as image recognition or language models.

Process: Scrapers are deployed to gather millions of images with their captions, or vast amounts of text data from various sources.

Value: Provides the necessary fuel for developing and refining cutting-edge AI technologies.

The Challenge: Modern Access Controls and AWS WAF

As the value of web data continues to rise, websites have adopted increasingly advanced security and traffic filtering mechanisms. These include rate limiting, IP reputation checks, and CAPTCHA verification, often enforced through Web Application Firewalls (WAFs) such as AWS WAF. Under these controls, both large-scale crawling and selective data extraction can be flagged as abnormal behavior, resulting in blocked requests or disrupted data pipelines.

When traffic is suspected to be automated, AWS WAF may issue a CAPTCHA challenge, stopping the workflow until it is resolved. In such scenarios, dedicated solutions become essential to maintain continuity and data reliability.

CapSolver: The Essential Tool for Uninterrupted Data Acquisition

CapSolver is an AI-powered CAPTCHA and anti-bot bypass service that ensures your web crawling and web scraping operations remain seamless and efficient, even against the toughest defenses like AWS WAF. It acts as a critical layer, solving challenges in real-time so your crawlers and scrapers can proceed without interruption.

CapSolver offers both token-based and recognition-based solutions for AWS WAF, allowing developers to integrate it directly into their existing Python or Node.js scraping frameworks.

Redeem Your CapSolver Bonus Code

Boost your automation budget instantly!

Use bonus code CAPN when topping up your CapSolver account to get an extra 5% bonus on every recharge — with no limits.

Redeem it now in your CapSolver Dashboard.

Integrating CapSolver for AWS WAF Bypass

To demonstrate the power of CapSolver, here is how you would integrate it to solve an AWS WAF challenge using both the recognition and token modes.

Solution 1: AWS WAF Recognition Mode (Image-Based CAPTCHA)

This mode is used when the WAF presents a visual challenge (e.g., "Select all images with a car").

Code Operation Steps (Python Example):

- Capture: Your scraper detects the AWS WAF image challenge and captures the image.

- Submit: Send the image to the CapSolver API using the AwsWafClassification task type.

- Receive: CapSolver's AI returns the coordinates or labels of the correct objects.

- Solve: Your scraper uses the coordinates to simulate the correct clicks on the challenge page.

CapSolver Task (Recognition Mode) Reference:

For detailed API parameters and implementation, refer to the official documentation: CapSolver AWS WAF Classification

Solution 2: AWS WAF Token Mode (Invisible/Challenge Page)

This mode is used when the WAF requires a valid token to proceed, often after a brief loading screen or an invisible check.

Code Operation Steps (Python Example):

- Identify: Your scraper identifies the necessary parameters from the challenge page (e.g., host, iv, key, context).

- Submit: Send these parameters to the CapSolver API using the AwsWaf task type.

- Receive: CapSolver solves the challenge and returns a valid token.

- Bypass: Your scraper injects the received token into the subsequent request headers or form data to bypass the WAF.

CapSolver Task (Token Mode) Reference:

For detailed API parameters and implementation, refer to the official documentation: CapSolver AWS WAF Token

Conclusion and Call to Action

The distinction between web crawling and web scraping is clear: crawling is the map, and scraping is the treasure. Both are indispensable for modern data strategies, whether you are an SEO professional ensuring discoverability or a data scientist building a market intelligence platform.

However, the increasing use of sophisticated anti-bot systems like AWS WAF means that even the most well-designed crawling and scraping pipelines can fail. To maintain high uptime and data accuracy, you need a reliable solution.

CapSolver provides the essential layer of defense against these challenges, ensuring your data flow remains uninterrupted. Stop wasting time on manual CAPTCHA solving or dealing with IP bans.

Ready to build a robust, uninterrupted data pipeline?

- Start your journey: Visit the CapSolver website to explore all their anti-bot solutions.

- Get started immediately: Sign up for your free trial on the CapSolver Dashboard.

CapSolver Official Website

CapSolver Dashboard

Frequently Asked Questions (FAQ)

Q1: Is web crawling or web scraping illegal?

Neither web crawling nor web scraping is inherently illegal. Legality depends on what you collect and how you collect it. Respect the robots.txt file, avoid collecting private or copyrighted data, and adhere to each site's terms of service. Aggressive crawling that degrades a website's performance can be unethical and, in some cases, unlawful.
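Checking robots.txt does not have to be a manual step. Python's standard library ships `urllib.robotparser`, and the sketch below shows how a crawler might consult it before each request. The robots.txt content, the `example-bot` user agent, and the `is_allowed` helper are placeholders for this example. The rules are parsed from an inline string so the snippet runs offline, whereas a real crawler would load the file from the target site.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content, inlined so the sketch runs without network access.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def is_allowed(url, user_agent="example-bot"):
    """Return True if the parsed robots.txt rules permit fetching `url`."""
    return parser.can_fetch(user_agent, url)


print(is_allowed("https://example.com/products"))
print(is_allowed("https://example.com/private/data"))
print(parser.crawl_delay("example-bot"))
```

Honoring the crawl delay between requests, where a site declares one, also addresses the performance concern mentioned above.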

Q2: Can I do web scraping without web crawling?

Yes, you can. If you already have a list of specific URLs (e.g., a list of product pages from a database), you can perform web scraping directly on those pages without needing to first web crawl the entire site to discover them. Crawling is only necessary for discovery.

Q3: How does CapSolver help with both web crawling and web scraping?

CapSolver plays a key role in resolving access challenges that interrupt both workflows. In large-scale crawling, heavy request volume may trigger CAPTCHA verification due to rate limiting. In targeted scraping, a single extraction attempt might activate an AWS WAF challenge. CapSolver processes these verifications in real time, enabling smooth continuation of both the discovery phase (crawling) and the extraction phase (scraping) without disruption.

Q4: What is the main difference between a simple scraper and a full-fledged crawler framework like Scrapy?

A simple scraper (e.g., using requests and BeautifulSoup) is typically a single script designed to extract data from a single page or a small, known list of URLs. A full-fledged crawler framework like Scrapy is designed for large-scale web crawling. It handles link discovery, request scheduling, retries, and item pipelines out of the box, and supports features such as proxy rotation through middleware, making it suitable for mapping entire websites.

Q5: Is web crawling only for search engines?

No. While search engines are the most famous users of web crawling, it is also used by SEO tools for site audits, by academic researchers to map citation networks, and by content aggregators to discover new articles. Any task requiring the systematic discovery of links and pages benefits from web crawling.
