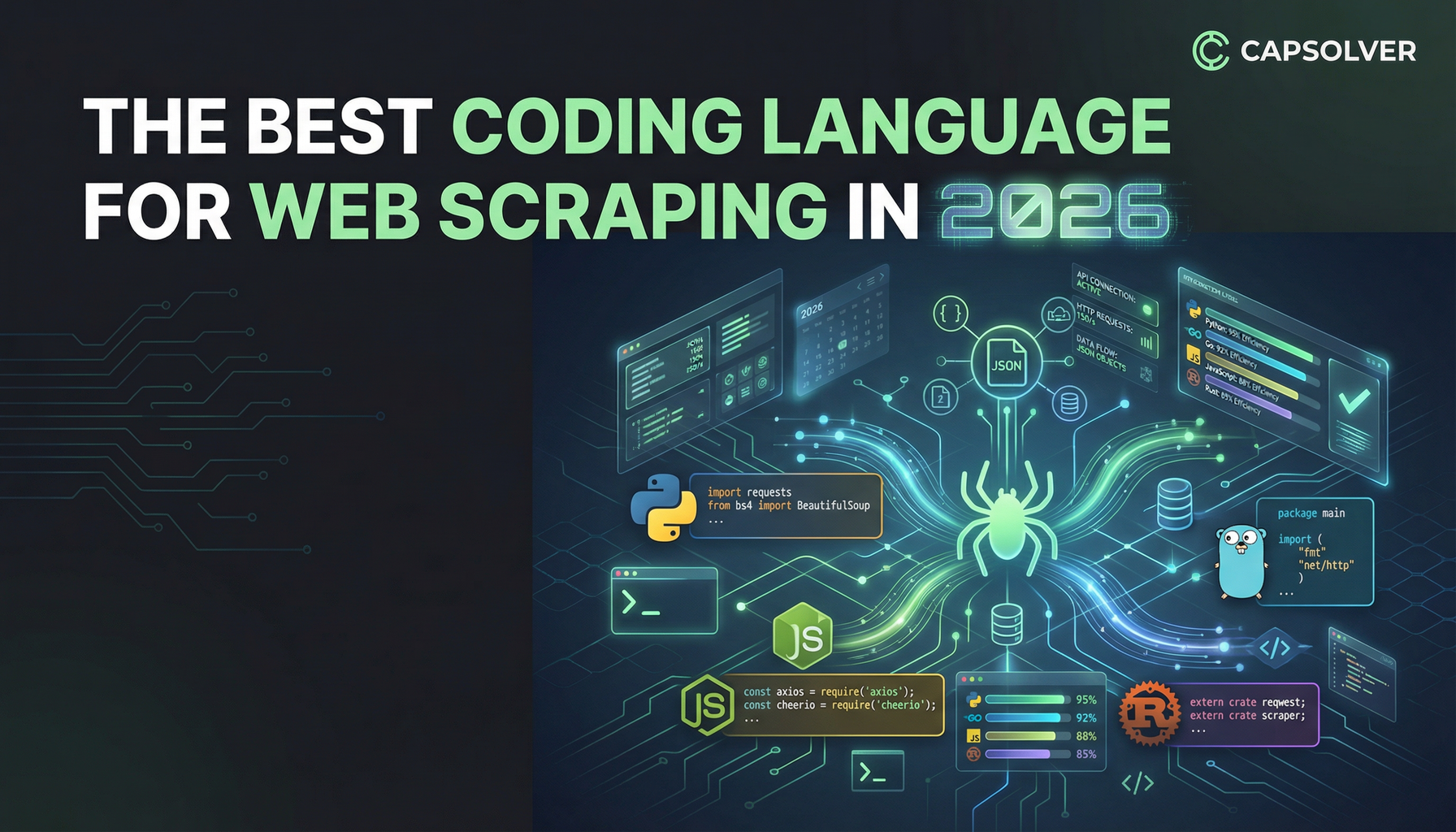

The Best Coding Language for Web Scraping in 2026

Sora Fujimoto

AI Solutions Architect

TL;DR

- Python remains the most versatile and beginner-friendly coding language for web scraping due to its rich ecosystem of libraries like Scrapy and BeautifulSoup.

- For high-volume, performance-critical web scraping operations, compiled languages like Go and Rust offer superior speed and concurrency, making them the top choices for large-scale data extraction in 2026.

- JavaScript (Node.js) is essential for scraping modern, dynamic websites built with Single-Page Application (SPA) frameworks, as it drives headless browsers such as Puppeteer and Playwright that execute client-side rendering.

- The choice of coding language is secondary to overcoming anti-bot measures; tools like CapSolver are necessary to ensure the reliability of any web scraping project.

Introduction

Choosing the right coding language is the foundational decision for any successful web scraping project. The "best" language is not a universal constant; it is a dynamic variable that depends entirely on the project's specific requirements, such as scale, speed, and the complexity of the target websites. This comprehensive guide is designed for developers, data scientists, and business analysts who are planning or scaling their data extraction efforts in 2026. We will analyze the strengths and weaknesses of the top programming languages, helping you select the optimal tool for your unique web scraping challenges. By understanding the modern landscape, you can build a more efficient and robust data pipeline.

The Top Contenders: A Deep Dive into 6 Languages

The evolution of the web, with its increasing reliance on JavaScript and sophisticated anti-bot defenses, has changed the demands placed on a coding language used for web scraping. While some languages excel in rapid development, others dominate in raw performance and concurrency. Here, we explore the leading options for data extraction in 2026.

Python: The King of Data Extraction

Python has held the top spot in the web scraping community for over a decade, and its dominance continues into 2026. Its clear, readable syntax significantly reduces development time, making it the ideal coding language for rapid prototyping and small-to-medium projects. The extensive library ecosystem is Python's greatest asset, providing specialized tools for every stage of the scraping process. Libraries like Scrapy offer a complete framework for large-scale projects, while BeautifulSoup is perfect for simple HTML parsing.

Pros for Web Scraping:

- Vast Ecosystem: Unmatched collection of libraries (Scrapy, BeautifulSoup, Requests, Selenium).

- Ease of Use: Simple syntax and a gentle learning curve for new developers.

- Community Support: A massive, active community provides constant updates and solutions.

Cons for Web Scraping:

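- Performance Bottleneck: The Global Interpreter Lock (GIL) prevents threads from running Python code in parallel, which limits CPU-bound work such as parsing large volumes of pages. I/O-bound requests are less affected, because threads release the GIL while waiting on the network.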

- Memory Usage: Python processes can be memory-intensive compared to compiled languages.

Best Use Case: Rapid development, data analysis workflows, and projects where development speed is prioritized over raw execution speed.
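
The GIL point above is easy to overstate, so here is a minimal sketch of how a thread pool still overlaps network waits in Python. It uses only the standard library. The `fake_fetch` function, its 0.2-second latency, and the `example.com` URLs are assumptions for illustration: the sleep stands in for a real HTTP request so the example runs without network access.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fake_fetch(url: str, latency: float = 0.2) -> str:
    """Hypothetical stand-in for an HTTP request.

    time.sleep releases the GIL, much like a thread waiting on a socket.
    """
    time.sleep(latency)
    return f"<html>{url}</html>"


def fetch_all(urls, max_workers=8):
    """Fetch every URL on a thread pool; results keep the input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(fake_fetch, urls))


def timed(fn, *args):
    """Run fn(*args) and return (result, elapsed_seconds)."""
    start = time.perf_counter()
    result = fn(*args)
    return result, time.perf_counter() - start


urls = [f"https://example.com/page/{i}" for i in range(8)]
pages, elapsed = timed(fetch_all, urls)

# Eight sequential calls would take about 1.6 s; the pool overlaps the waits.
print(f"fetched {len(pages)} pages in {elapsed:.2f}s")
```

In a real scraper, `fake_fetch` would be replaced by a call to an HTTP client such as Requests. The CPU-bound parsing that follows the download is where the GIL is more likely to become the bottleneck.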

JavaScript (Node.js): Essential for Dynamic Content

The modern web is built on JavaScript, making Node.js an increasingly vital coding language for web scraping. Node.js allows developers to run JavaScript on the server side, which is crucial for interacting with websites that heavily rely on client-side rendering (SPAs). Tools like Puppeteer and Playwright provide powerful, high-level APIs to control headless browsers, effectively mimicking a real user's interaction with the page. This capability is non-negotiable when dealing with complex, dynamic content.

Pros for Web Scraping:

- Native Dynamic Handling: Headless browsers driven from Node.js execute the page's client-side JavaScript, solving the rendering problem in the same language the site is written in.

- Asynchronous I/O: Node.js is inherently non-blocking, making it highly efficient for concurrent network requests.

- Unified Stack: Developers can use a single coding language for both frontend and backend tasks.

Cons for Web Scraping:

- Resource Overhead: Headless browser usage consumes significantly more CPU and memory than simple HTTP requests.

- Library Maturity: While growing, the dedicated scraping library ecosystem is less mature than Python's.

Best Use Case: Scraping Single-Page Applications (SPAs), sites with heavy AJAX loading, and projects requiring complex user interaction simulation.
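
The non-blocking I/O model described above is not tied to one runtime. As a rough illustration, the sketch below shows the same event-loop pattern in Python's `asyncio` rather than in Node.js: a single thread starts many requests and a semaphore caps how many are in flight. The `fake_fetch` coroutine, the latency value, the concurrency limit of 5, and the URLs are all assumptions for illustration, with the sleep standing in for a real network call.

```python
import asyncio


async def fake_fetch(url: str, latency: float = 0.1):
    """Hypothetical stand-in for a non-blocking HTTP request."""
    await asyncio.sleep(latency)  # yields control to the event loop while "waiting"
    return url, 200


async def crawl(urls, limit: int = 5):
    """Fetch all URLs on one event loop, with at most `limit` in flight."""
    semaphore = asyncio.Semaphore(limit)

    async def bounded(url):
        async with semaphore:
            return await fake_fetch(url)

    # gather returns results in the same order as the input URLs.
    return await asyncio.gather(*(bounded(u) for u in urls))


urls = [f"https://example.com/item/{i}" for i in range(10)]
results = asyncio.run(crawl(urls))
print(f"{len(results)} responses, first: {results[0]}")
```

The semaphore is a design choice rather than a requirement: without it, every request would start at once, which is fast but makes it easy to overwhelm a target site.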

Go (Golang): The Speed and Concurrency Champion

Go, developed by Google, is the preferred coding language for performance-critical infrastructure, and its benefits translate directly to large-scale web scraping. Go's built-in concurrency model, based on goroutines, allows developers to manage thousands of simultaneous requests with minimal overhead. This makes it significantly faster and more resource-efficient than Python for high-volume tasks. When raw speed and efficient resource utilization are paramount, Go is the clear winner in 2026.

Pros for Web Scraping:

- Superior Concurrency: Goroutines enable highly efficient, lightweight parallel processing.

- Blazing Fast: Compiled language performance drastically reduces execution time.

- Low Memory Footprint: Excellent for running many scrapers on limited server resources.

Cons for Web Scraping:

- Fewer High-Level Libraries: Requires more manual coding for tasks like HTML parsing compared to Python.

- Verbosity: More verbose than Python, leading to slightly longer development cycles.

Best Use Case: Massive-scale web scraping projects, real-time data feeds, and systems where cost-efficiency of cloud resources is a key metric.

Java: The Enterprise Workhorse

Java is a robust, mature coding language that excels in building large, stable, and long-running enterprise applications. While it may not be the first choice for a quick, one-off web scraping script, its stability and extensive tooling make it suitable for complex, mission-critical data pipelines. Libraries like Jsoup and Apache HttpClient provide reliable tools for data extraction. Java's strong typing and mature garbage collection contribute to the reliability of large-scale systems.

Pros for Web Scraping:

- Stability and Scalability: Excellent for building highly stable, distributed scraping systems.

- Mature Ecosystem: Robust tooling and enterprise-level support.

Cons for Web Scraping:

- Development Speed: More verbose and slower to write than Python or Go.

- Performance: Generally slower than Go, though faster than standard Python for CPU-bound tasks.

Best Use Case: Enterprise-level data aggregation, financial data extraction, and projects requiring high stability and long-term maintenance.

Ruby: The Developer-Friendly Choice

Ruby, with its focus on developer happiness and elegant syntax, is a strong contender for smaller, more manageable web scraping tasks. The community provides excellent tools like Mechanize for stateful navigation and Nokogiri for efficient HTML parsing. While its performance is comparable to Python, Ruby's smaller community means fewer specialized web scraping libraries compared to the Python ecosystem. It remains a viable coding language for developers already comfortable with the Ruby environment.

Pros for Web Scraping:

- Elegant Syntax: Highly readable and enjoyable to write, leading to faster initial development.

- Mechanize: Excellent library for simulating user sessions and form submissions.

Cons for Web Scraping:

- Smaller Community: Fewer specialized libraries and less widespread adoption for large-scale scraping.

- Performance: Not the fastest option for highly concurrent operations.

Best Use Case: Simple, quick-to-deploy scrapers, and projects within existing Ruby-based infrastructure.

Rust: The Future of High-Performance Scraping

Rust is a modern coding language that is quickly gaining traction for its performance and memory safety. It has repeatedly ranked as the most admired language in Stack Overflow's annual Developer Survey. For web scraping, Rust offers the speed of C++ without the common memory-related bugs. Its asynchronous capabilities, powered by Tokio, make it a powerful choice for building ultra-fast, concurrent scrapers that can handle massive volumes of requests efficiently.

Pros for Web Scraping:

- Extreme Performance: Near C/C++ speed with zero-cost abstractions.

- Memory Safety: Eliminates entire classes of bugs common in other languages.

- Concurrency: Excellent asynchronous framework for high-throughput web scraping.

Cons for Web Scraping:

- Steep Learning Curve: The focus on ownership and borrowing can be challenging for newcomers.

- Limited Ecosystem: The high-level scraping library ecosystem is still nascent compared to Python.

Best Use Case: Cutting-edge, ultra-high-performance web scraping systems where speed, resource efficiency, and reliability are the absolute highest priorities.

Comparison Summary: Choosing Your Weapon

The decision of which coding language to use for web scraping often comes down to a trade-off between development speed and execution speed. The table below summarizes the key differences between the top contenders.

| Language | Ease of Use | Performance/Speed | Library Ecosystem | Dynamic Content | Concurrency Model |
|---|---|---|---|---|---|
| Python | ★★★★★ | ★★★☆☆ | ★★★★★ | ★★★☆☆ | Threading/Multiprocessing |
| JavaScript (Node.js) | ★★★★☆ | ★★★★☆ | ★★★☆☆ | ★★★★★ | Event Loop (Non-blocking I/O) |
| Go (Golang) | ★★★☆☆ | ★★★★★ | ★★★☆☆ | ★★☆☆☆ | Goroutines (Lightweight Threads) |
| Java | ★★★☆☆ | ★★★★☆ | ★★★★☆ | ★★☆☆☆ | Traditional Threads |
| Ruby | ★★★★☆ | ★★★☆☆ | ★★★☆☆ | ★★☆☆☆ | Traditional Threads |
| Rust | ★★☆☆☆ | ★★★★★ | ★★☆☆☆ | ★★☆☆☆ | Tokio (Asynchronous Runtime) |

Note: Ratings are relative to the specific context of web scraping.

Real-World Application Scenarios

The best way to illustrate the choice of coding language is through practical examples. Different projects demand different tools.

Scenario 1: E-commerce Price Monitoring (Python)

A small business needs to track the prices of 500 products across five competitor websites daily. The data volume is low, and the primary goal is to integrate the scraped data quickly into an existing spreadsheet or database.

- Why Python? Python is the ideal coding language here. The speed of development using libraries like Requests and BeautifulSoup allows the developer to set up the monitoring script in hours, not days. The ease of integrating Python with data analysis tools like Pandas makes post-scraping processing simple. This is a classic case where development time outweighs the need for micro-optimization of execution speed.
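
To make the price-monitoring scenario concrete, here is a minimal sketch of the parsing step. It uses the standard library's `html.parser` as a dependency-free stand-in for BeautifulSoup, and it parses an inline sample instead of downloading a page. The markup, the `name` and `price` class names, and the dollar-prefixed price format are assumptions for illustration; a real competitor page will differ.

```python
from html.parser import HTMLParser

# Hypothetical product listing; real sites will use different markup.
SAMPLE_HTML = """
<ul>
  <li class="product"><span class="name">Widget</span><span class="price">$19.99</span></li>
  <li class="product"><span class="name">Gadget</span><span class="price">$5.00</span></li>
</ul>
"""


class PriceParser(HTMLParser):
    """Collect (name, price) pairs from <span class="name"> / <span class="price">."""

    def __init__(self):
        super().__init__()
        self._field = None   # which field the next text node belongs to
        self._current = {}   # fields gathered for the product in progress
        self.products = []

    def handle_starttag(self, tag, attrs):
        css_class = dict(attrs).get("class")
        if tag == "span" and css_class in ("name", "price"):
            self._field = css_class

    def handle_data(self, data):
        if self._field is None:
            return
        self._current[self._field] = data.strip()
        self._field = None
        if len(self._current) == 2:  # both name and price seen
            price = float(self._current["price"].lstrip("$"))
            self.products.append((self._current["name"], price))
            self._current = {}


def parse_prices(html: str):
    parser = PriceParser()
    parser.feed(html)
    return parser.products


print(parse_prices(SAMPLE_HTML))  # [('Widget', 19.99), ('Gadget', 5.0)]
```

The resulting list of tuples can be written to a spreadsheet or loaded into a Pandas DataFrame for the daily comparison the scenario describes.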

Scenario 2: Large-Scale News Aggregation (Go/Rust)

A media company needs to scrape millions of news articles per day from thousands of sources globally, requiring high throughput and minimal latency. The system must run 24/7 on a cluster of cloud servers.

- Why Go or Rust? This is a performance-critical task. Go's superior concurrency and low resource consumption make it well suited to managing thousands of simultaneous network connections efficiently. Rust is an even stronger choice if the team can handle the initial learning curve, offering maximum speed and reliability for a system that cannot afford to fail. The efficiency of these compiled languages directly translates to lower cloud computing costs for the company.

Scenario 3: Single-Page Application (SPA) Data Extraction (JavaScript/Node.js)

A market research firm needs to extract user-generated content from a modern social media platform built entirely with React. The required data only appears after complex client-side JavaScript executes.

- Why JavaScript (Node.js)? Because the target site is a dynamic SPA, a traditional HTTP client will only receive a blank HTML shell. Node.js, paired with a headless browser like Playwright, is the most natural fit, although Playwright also offers bindings for other languages such as Python. It can fully render the page, execute all necessary JavaScript, and then extract the final, loaded content. This capability is essential for modern web scraping against complex web applications.
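
Before paying the cost of a headless browser, it can help to check whether a plain HTTP response really is the "blank HTML shell" described above. The sketch below is one rough heuristic, not an established method: it flags a page that loads scripts but carries almost no visible text. The 50-character threshold and both sample documents are assumptions for illustration.

```python
from html.parser import HTMLParser


class _TextAndScripts(HTMLParser):
    """Count <script> tags and visible text characters in a document."""

    def __init__(self):
        super().__init__()
        self.script_count = 0
        self.text_chars = 0
        self._skip_depth = 0  # > 0 while inside <script> or <style>

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1
            if tag == "script":
                self.script_count += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.text_chars += len(data.strip())


def looks_like_spa_shell(html: str, min_text_chars: int = 50) -> bool:
    """Heuristic: scripts are present but there is almost no visible text."""
    parser = _TextAndScripts()
    parser.feed(html)
    return parser.script_count > 0 and parser.text_chars < min_text_chars


# Hypothetical responses: a typical SPA shell and a server-rendered page.
SHELL = (
    '<html><head><title>App</title></head><body><div id="root"></div>'
    '<script src="/static/js/main.js"></script></body></html>'
)
STATIC = (
    "<html><body><h1>Quarterly report</h1><p>"
    + "Revenue grew in every region this quarter. " * 3
    + "</p></body></html>"
)

print(looks_like_spa_shell(SHELL))   # True
print(looks_like_spa_shell(STATIC))  # False
```

A scraper could use a check like this to fall back to a headless browser only for the pages that need one, keeping the cheaper HTTP client for everything else.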

The Unavoidable Challenge: Anti-Scraping Measures

Regardless of the coding language you choose—be it Python, Go, or JavaScript—your web scraping operation will inevitably encounter sophisticated defenses. Websites employ various techniques to protect their data, including IP rate limiting, browser fingerprinting, and complex CAPTCHA challenges. These measures can halt even the most perfectly written scraper, rendering your choice of coding language irrelevant if the requests are blocked.
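
Of the defenses listed above, IP rate limiting is the one a scraper can often handle by simply slowing down. The following is a minimal sketch of retrying with exponential backoff and jitter when a server answers with HTTP 429 or 503. The `fetch` callable, its `(status, body)` return shape, and the default delays are assumptions for illustration; the request function is injected so the example runs without network access.

```python
import random
import time


def backoff_delays(max_retries: int, base: float = 1.0, cap: float = 30.0):
    """Yield delays base, 2*base, 4*base, ... with each one capped at `cap`."""
    for attempt in range(max_retries):
        yield min(cap, base * 2 ** attempt)


def fetch_with_backoff(fetch, url, max_retries=4, base=1.0, sleep=time.sleep):
    """Call fetch(url) -> (status, body), retrying on 429/503 responses.

    `fetch` is a hypothetical callable supplied by the caller; `sleep` is
    injectable so tests and demos do not have to wait.
    """
    status, body = fetch(url)
    for delay in backoff_delays(max_retries, base):
        if status not in (429, 503):
            break
        # Random jitter keeps many workers from retrying at the same instant.
        sleep(delay + random.uniform(0, delay / 2))
        status, body = fetch(url)
    return status, body


# Demo with a fake server that rate-limits twice, then succeeds.
responses = iter([(429, ""), (429, ""), (200, "ok")])
result = fetch_with_backoff(
    lambda url: next(responses), "https://example.com", sleep=lambda s: None
)
print(result)  # (200, 'ok')
```

Backoff only addresses rate limits. It does nothing for browser fingerprinting or CAPTCHA challenges, which need different handling.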

To maintain a reliable and consistent data flow, developers must integrate specialized tools that handle these challenges automatically. This is where a dedicated service becomes indispensable for any serious web scraping effort.

Recommended Tool: CapSolver

To ensure your chosen coding language can consistently deliver data, we recommend integrating CapSolver into your workflow. CapSolver is a powerful service designed to handle the most challenging anti-bot systems, including various forms of CAPTCHAs and advanced detection mechanisms.

By offloading the complexity of challenge resolution to CapSolver, your development team can focus on the core logic of the web scraping process. This integration ensures that your scrapers, regardless of whether they are written in Python or Go, maintain high uptime and data integrity. CapSolver acts as a crucial layer of reliability, allowing your scraper to proceed as if no challenge were present.

We encourage you to explore the capabilities of CapSolver to see how it can enhance the stability of your data extraction pipelines. You can get started on the CapSolver Homepage and view your usage statistics on the CapSolver dashboard.

Redeem Your CapSolver Bonus Code

Boost your automation budget instantly!

Use bonus code CAPN when topping up your CapSolver account to get an extra 5% bonus on every recharge — with no limits.

Redeem it now in your CapSolver Dashboard.

Conclusion and Call to Action

The best coding language for web scraping in 2026 is the one that aligns with your project's goals. Python remains the most accessible and versatile choice for the majority of projects. However, for those focused on extreme scale and performance, Go and Rust are the future. JavaScript (Node.js) is a necessity for navigating the dynamic web.

Ultimately, the success of your web scraping project hinges not just on the language, but on your ability to overcome obstacles. A robust web scraping solution requires a multi-faceted approach that includes a well-chosen coding language and a reliable challenge-solving service. Don't let anti-bot measures derail your data collection efforts.

Take the next step in building a resilient data pipeline. Start your web scraping project today and ensure its success by integrating CapSolver for reliable challenge resolution.

Frequently Asked Questions (FAQ)

Q1: Is Python still the best language for web scraping in 2026?

Yes, Python is still the best all-around coding language for web scraping in 2026. Its extensive, mature library ecosystem (Scrapy, BeautifulSoup) and ease of use make it the default choice for most developers. While compiled languages like Go and Rust are faster, Python's rapid development cycle and community support keep it at the top for general-purpose data extraction.

Q2: Should I use a headless browser or an HTTP client for web scraping?

The choice depends on the target website. An HTTP client (like Python's Requests or Go's standard library) is faster and more resource-efficient, and should be used whenever possible. However, if the website is a modern Single-Page Application (SPA) that loads content via JavaScript, you must use a headless browser (like Puppeteer or Playwright) to render the page before extracting the data.

Q3: How does CapSolver help with web scraping?

CapSolver provides a crucial service by automatically handling various challenges, such as CAPTCHAs, that often block web scraping operations. By integrating CapSolver into your scraper, you ensure that your data extraction process remains uninterrupted, regardless of the coding language you use. This significantly improves the reliability and uptime of your scraping pipeline.

Q4: Which language is the fastest for web scraping?

Go (Golang) and Rust are the fastest languages for web scraping. As compiled languages, they offer superior execution speed and highly efficient concurrency models (goroutines in Go, Tokio in Rust). This makes them significantly faster than interpreted languages like Python or Ruby for high-volume, concurrent network requests.
