How to Solve Visual Puzzles in n8n with CapSolver

Ethan Collins

Pattern Recognition Specialist

Visual puzzles are everywhere: slider CAPTCHAs that require dragging a piece to the correct position, rotation challenges where you align an image, object-selection grids, and animated GIF text recognition. These are not traditional text-based CAPTCHAs and they are not the token-based challenges (like reCAPTCHA or Turnstile) that return a string you submit with a form. They are image-based visual challenges where the input is a picture and the output is a measurement — a distance in pixels, an angle in degrees, a set of coordinates, or recognized text.

That is what CapSolver's Vision Engine solves. It uses AI to analyze the visual puzzle image and return the precise answer your automation needs to proceed.

In this guide, you will learn how to use the Vision Engine in n8n through the CapSolver community node. The walkthrough covers the core Solver API workflow and a practical Slider Puzzle Solver that fetches puzzle images, converts them to base64, solves the slider, and returns the distance in pixels.

Important: Vision Engine is a Recognition operation, not a Token operation. That means the result comes back instantly in a single API call: there is no polling, no getTaskResult loop, and no timeout waiting. You send the image, you get the answer.

How Vision Engine Differs from Other CapSolver Operations

Most CapSolver operations in n8n are Token tasks. You submit site parameters (URL, site key, proxy), CapSolver solves the challenge in the background, and your workflow polls for the result. The output is a token string that you then submit to the target site.

Vision Engine works differently:

| Aspect | Token Operations (reCAPTCHA, Turnstile, etc.) | Vision Engine (Recognition) |

|---|---|---|

| Resource | Token | Recognition |

| Input | Website URL, site key, proxy | Base64 image(s), module name |

| Processing | Async — poll for result | Instant — single API call |

| Output | Token string | Pixels, degrees, coordinates, or text |

| Proxy | Often required | Not needed |

| Use case | Submit token to bypass challenge gate | Interpret visual puzzle to automate interaction |

Vision Engine is closer to Image To Text (OCR) than to reCAPTCHA solving, but it goes beyond simple text recognition. Where Image To Text reads characters from a static image, Vision Engine understands spatial relationships — it can calculate how far to drag a slider piece, what angle to rotate an image, which areas of a picture match a question, or what text is hidden in an animated GIF.

Available Modules

Vision Engine supports multiple AI models, each designed for a specific type of visual puzzle:

| Module | Purpose | Input | Returns |

|---|---|---|---|

| slider_1 | Slider puzzle solving | image (puzzle piece) + imageBackground (background with slot) | Distance in pixels |

| rotate_1 | Single image rotation | image + imageBackground | Angle in degrees |

| rotate_2 | Multi-image rotation (inner + outer) | image (inner image) | Angle in degrees |

| shein | Object/area selection | image + question (what to select) | rects array of bounding boxes [{x1, y1, x2, y2}] |

| ocr_gif | Animated GIF text recognition | image (base64 of the GIF) | Recognized text string |

When to Use Each Module

slider_1 — The most common visual CAPTCHA type. The user sees a background image with a missing piece and a separate puzzle piece. The goal is to determine how many pixels to the right the piece must be dragged. Both image (the puzzle piece) and imageBackground (the full background with the slot) are required.

rotate_1 — A single image that must be rotated to the correct orientation. Both image and imageBackground are required. The engine returns the angle in degrees.

rotate_2 — Two concentric images (an inner image and an outer ring). The inner image must be rotated to align with the outer. Only image is needed. The engine returns the angle.

shein — Used for challenges that ask "select the matching items" or "tap the correct area." Requires image plus a question parameter describing what to find. Returns bounding-box coordinates for each matching area.

ocr_gif — Animated GIFs where text flashes across frames, making it unreadable for standard OCR. The engine analyzes the animation and extracts the text.

Prerequisites

Before you start, make sure you have:

- An n8n instance (self-hosted or cloud)

- A CapSolver account with API key and balance — sign up here

- The CapSolver community node installed in n8n (n8n-nodes-capsolver)

- A configured CapSolver credential in n8n (Settings > Credentials > CapSolver API)

No proxy is required for Vision Engine tasks.

CapSolver Node Settings for Vision Engine

In the n8n CapSolver node, configure these settings:

| Setting | Value |

|---|---|

| Resource | Recognition |

| Operation | Vision Engine |

| module | The model name (e.g., slider_1, rotate_1, ocr_gif) |

| image | Base64-encoded image string (no data:image/...;base64, prefix) |

| imageBackground | Base64-encoded background image (optional — required for slider_1 and rotate_1) |

| question | Text question (optional — required only for shein module) |

| websiteURL | Source page URL (optional — can improve accuracy) |

The type field is automatically set to VisionEngine when you select the Vision Engine operation.
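To make the table concrete, the fields can be pictured as one task object. The sketch below is illustrative only: `buildVisionEngineTask` is a hypothetical helper, and the assumption that empty optional fields are simply left out is not confirmed by this article. The exact payload the node sends is not shown here.

```javascript
// Hypothetical helper: gathers the settings-table fields into a single object.
// Assumption: optional fields are omitted when they are empty.
function buildVisionEngineTask(fields) {
  const task = {
    type: 'VisionEngine', // set automatically by the node, per the text above
    module: fields.module,
    image: fields.image,
  };
  if (fields.imageBackground) task.imageBackground = fields.imageBackground;
  if (fields.question) task.question = fields.question;
  if (fields.websiteURL) task.websiteURL = fields.websiteURL;
  return task;
}

const sliderTask = buildVisionEngineTask({
  module: 'slider_1',
  image: 'BASE64_PUZZLE_PIECE',
  imageBackground: 'BASE64_BACKGROUND',
});
console.log(JSON.stringify(sliderTask, null, 2));
```

Leaving out empty optional fields keeps the object small and makes it obvious which inputs a given module actually received.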

Base64 Image Requirements

The image and imageBackground fields must be raw base64 strings — no data URI prefix, no newlines:

- Correct: /9j/4AAQSkZJRgABA... (raw base64)

- Wrong: data:image/jpeg;base64,/9j/4AAQSkZJRgABA... (has prefix)

If your source image is a URL, you must fetch it first and convert it to base64. If it already has the data:image/...;base64, prefix, strip it before passing it to the CapSolver node.
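As a minimal sketch of that clean-up step, the helper below removes whitespace and a leading data URI prefix. The name `toRawBase64` is hypothetical; it could live in an n8n Code node or in any script that prepares the request.

```javascript
// Hypothetical helper: returns a raw base64 string suitable for the
// image / imageBackground fields.
function toRawBase64(input) {
  return String(input)
    .replace(/\s+/g, '')                  // drop newlines and spaces
    .replace(/^data:[^,]*;base64,/i, ''); // drop a leading data URI prefix
}

console.log(toRawBase64('data:image/jpeg;base64,/9j/4AAQ\nSkZJRgABA'));
```

A string that is already raw base64 passes through unchanged, so the helper is safe to apply unconditionally.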

Workflow 1: Vision Engine — Solver API

This workflow exposes Vision Engine as a simple REST API endpoint. Send a POST request with the module name and base64 image(s), and get the solution back as JSON.

Node Flow

Receive Solver Request (Webhook POST)

→ Validate Input (Code)

→ Solve Visual Puzzle (CapSolver — Recognition — Vision Engine)

→ Vision Engine Error? (IF)

→ true: Respond to Webhook Error

→ false: Respond to Webhook (Success)

How It Works

1. Receive Solver Request

A webhook endpoint accepts POST requests with a JSON body containing:

json

{

"module": "slider_1",

"image": "/9j/4AAQSkZJRgABA...",

"imageBackground": "/9j/4AAQSkZJRgABA...",

"question": "",

"websiteURL": ""

}

2. Validate Input

The Code node checks that image exists and that module is one of the supported values (slider_1, rotate_1, rotate_2, shein, ocr_gif). If validation fails, it sets an error field.
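The check described above can be sketched as a plain function. This is a simplified illustration rather than the node's exact code: `validateRequest` and `SUPPORTED_MODULES` are hypothetical names, and only the two checks named in this step are covered.

```javascript
// Modules listed in this article.
const SUPPORTED_MODULES = ['slider_1', 'rotate_1', 'rotate_2', 'shein', 'ocr_gif'];

// Hypothetical helper: returns { error } on failure, or the cleaned fields on success.
function validateRequest(body = {}) {
  const moduleName = String(body.module || '').trim();
  const image = String(body.image || '').trim();

  if (!image) {
    return { error: 'Missing required field: image (base64 encoded)' };
  }
  if (!SUPPORTED_MODULES.includes(moduleName)) {
    return { error: `Invalid module: ${moduleName || '(empty)'}` };
  }
  return { module: moduleName, image, validated: true };
}

console.log(validateRequest({ module: 'slider_1', image: '/9j/4AAQ' }));
console.log(validateRequest({ module: 'slider_9', image: '/9j/4AAQ' }));
```

Returning an object with an `error` field, instead of throwing, matches the way the later IF node branches on whether `error` is non-empty.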

3. Solve Visual Puzzle

The CapSolver node is configured with:

- Resource: Recognition

- Operation: Vision Engine

- module: from the request body

- image: from the request body

- imageBackground: from the request body (empty string if not provided)

- question: from the request body (empty string if not provided)

Since this is a Recognition task, the result returns instantly.

4. Error Handling

The IF node checks for errors. If the CapSolver node returned an error (wrong module, invalid image, etc.), the error response webhook fires. Otherwise, the success response returns the solution.

Expected Request and Response

Slider puzzle request:

bash

curl -X POST https://your-n8n-instance.com/webhook/vision-engine-solver \

-H "Content-Type: application/json" \

-d '{

"module": "slider_1",

"image": "BASE64_PUZZLE_PIECE",

"imageBackground": "BASE64_BACKGROUND"

}'

Success response:

json

{

"solution": {

"distance": 142,

"module": "slider_1"

}

}

GIF OCR request:

bash

curl -X POST https://your-n8n-instance.com/webhook/vision-engine-solver \

-H "Content-Type: application/json" \

-d '{

"module": "ocr_gif",

"image": "BASE64_GIF_DATA"

}'

Success response:

json

{

"solution": {

"text": "x7Km9",

"module": "ocr_gif"

}

}

Import This Workflow


json

{

"nodes": [

{

"parameters": {

"content": "## Vision Engine \u2014 Solver API\n\n### How it works\n\n1. Receives a vision processing request through a webhook.\n2. Validates the input from the request.\n3. Processes the request using the Vision Engine Solver API.\n4. Checks if an error occurred during processing.\n5. Sends a success response if processing was successful.\n6. Sends an error response if processing failed.\n\n### Setup steps\n\n- [ ] Set up a webhook URL to receive requests for the 'Receive Vision Request' node.\n- [ ] Configure the 'Validate Input' node with any required input validation logic.\n- [ ] Configure the 'Solve Vision Engine' node with appropriate API keys or access credentials.\n- [ ] Define conditions in the 'Error?' node for assessing processing outcomes.\n",

"height": 624,

"width": 480

},

"type": "n8n-nodes-base.stickyNote",

"typeVersion": 1,

"position": [

-992,

-368

],

"id": "f0d88aeb-a5cd-4f57-8d08-05b388ec38c3",

"name": "Sticky Note"

},

{

"parameters": {

"content": "## Receive and validate input\n\nHandles incoming requests and validates them before processing.",

"height": 304,

"width": 496,

"color": 7

},

"type": "n8n-nodes-base.stickyNote",

"typeVersion": 1,

"position": [

-432,

-208

],

"id": "03c30168-9556-4ff3-ae53-c236685f9fef",

"name": "Sticky Note1"

},

{

"parameters": {

"content": "## Vision engine processing\n\nProcesses the validated request through the Vision Engine solver.",

"height": 352,

"color": 7

},

"type": "n8n-nodes-base.stickyNote",

"typeVersion": 1,

"position": [

288,

-272

],

"id": "21189068-5424-4697-8618-f9689eed2b15",

"name": "Sticky Note2"

},

{

"parameters": {

"content": "## Handle response\n\nEvaluates the result and responds with either success or error.",

"height": 528,

"width": 512,

"color": 7

},

"type": "n8n-nodes-base.stickyNote",

"typeVersion": 1,

"position": [

640,

-368

],

"id": "8aa644e9-135c-4381-b47b-f20acf83dd4f",

"name": "Sticky Note3"

},

{

"parameters": {

"httpMethod": "POST",

"path": "vision-engine-solver",

"responseMode": "responseNode",

"options": {}

},

"id": "ve-api-001",

"name": "Receive Vision Request",

"type": "n8n-nodes-base.webhook",

"typeVersion": 2.1,

"position": [

-384,

-80

],

"webhookId": "27e68e0b-9e2a-4c8d-ac43-92cda6e512e2",

"onError": "continueRegularOutput"

},

{

"parameters": {

"jsCode": "const body = $input.first().json.body || {};\nconst validModules = ['slider_1', 'rotate_1', 'rotate_2', 'shein', 'ocr_gif'];\nconst module = (body.module || '').trim();\nconst image = (body.image || '').trim();\nconst imageBackground = (body.imageBackground || '').trim();\nconst question = (body.question || '').trim();\nconst websiteURL = (body.websiteURL || '').trim();\nif (!image) return [{ json: { error: 'Missing required field: image (base64 encoded)' } }];\nif (!module) return [{ json: { error: 'Missing required field: module' } }];\nif (!validModules.includes(module)) return [{ json: { error: `Invalid module: ${module}. Must be one of: ${validModules.join(', ')}` } }];\nif ((module === 'slider_1' || module === 'rotate_2') && !imageBackground) return [{ json: { error: `Module ${module} requires imageBackground` } }];\nif (module === 'shein' && !question) return [{ json: { error: 'Module shein requires a question parameter' } }];\nreturn [{ json: { module, image, imageBackground, question, websiteURL, validated: true } }];"

},

"id": "ve-api-002",

"name": "Validate Input",

"type": "n8n-nodes-base.code",

"typeVersion": 2,

"position": [

-80,

-80

]

},

{

"parameters": {

"resource": "Recognition",

"operation": "Vision Engine",

"websiteURL": "={{ $json.websiteURL }}",

"module": "={{ $json.module }}",

"image": "={{ $json.image }}",

"imageBackground": "={{ $json.imageBackground }}",

"question": "={{ $json.question }}",

"optional": {}

},

"id": "ve-api-003",

"name": "Solve Vision Engine",

"type": "n8n-nodes-capsolver.capSolver",

"typeVersion": 1,

"position": [

336,

-80

],

"credentials": {

"capSolverApi": {

"id": "BeBFMAsySMsMGeE9",

"name": "CapSolver account"

}

},

"onError": "continueRegularOutput"

},

{

"parameters": {

"conditions": {

"options": {

"version": 2,

"caseSensitive": true,

"leftValue": "",

"typeValidation": "strict"

},

"combinator": "and",

"conditions": [

{

"id": "condition-1",

"operator": {

"type": "string",

"operation": "isNotEmpty",

"singleValue": true

},

"leftValue": "={{ $json.error }}",

"rightValue": ""

}

]

},

"options": {}

},

"id": "ve-api-004",

"name": "Error?",

"type": "n8n-nodes-base.if",

"typeVersion": 2.2,

"position": [

688,

-80

]

},

{

"parameters": {

"respondWith": "json",

"responseBody": "={{ JSON.stringify($json.data) }}",

"options": {}

},

"id": "ve-api-005",

"name": "Respond Success",

"type": "n8n-nodes-base.respondToWebhook",

"typeVersion": 1.5,

"position": [

1008,

-240

]

},

{

"parameters": {

"respondWith": "json",

"responseBody": "={{ JSON.stringify({ error: $json.error }) }}",

"options": {

"responseCode": 400

}

},

"id": "ve-api-006",

"name": "Respond Error",

"type": "n8n-nodes-base.respondToWebhook",

"typeVersion": 1.5,

"position": [

1008,

-16

]

}

],

"connections": {

"Receive Vision Request": {

"main": [

[

{

"node": "Validate Input",

"type": "main",

"index": 0

}

]

]

},

"Validate Input": {

"main": [

[

{

"node": "Solve Vision Engine",

"type": "main",

"index": 0

}

]

]

},

"Solve Vision Engine": {

"main": [

[

{

"node": "Error?",

"type": "main",

"index": 0

}

]

]

},

"Error?": {

"main": [

[

{

"node": "Respond Error",

"type": "main",

"index": 0

}

],

[

{

"node": "Respond Success",

"type": "main",

"index": 0

}

]

]

}

},

"pinData": {},

"meta": {

"instanceId": "962ff0267b713be0344b866fa54daae28de8ed2144e2e6867da355dae193ea1f"

}

}

Workflow 2: Slider Puzzle Solver — Fetch & Solve

This workflow demonstrates a practical end-to-end slider puzzle solver. It fetches the puzzle piece and background images from URLs, converts them to base64, sends them to Vision Engine with the slider_1 module, and returns the pixel distance needed to complete the slider.

This is the pattern you would use when integrating slider CAPTCHA solving into a larger automation — the returned distance tells your browser automation (Puppeteer, Playwright, Selenium) exactly how far to drag the slider handle.
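As an illustration of how the returned distance might be consumed, the sketch below splits a drag into a series of cumulative x offsets. It is not tied to any browser automation library: `dragOffsets` is a hypothetical helper, and the ease-out curve and step count are arbitrary choices.

```javascript
// Hypothetical helper: splits a drag of `distance` pixels into `steps`
// cumulative x offsets, slowing down as the piece approaches the target.
function dragOffsets(distance, steps = 20) {
  const offsets = [];
  for (let i = 1; i <= steps; i++) {
    const t = i / steps;             // progress from 0 to 1
    const eased = 1 - (1 - t) ** 2;  // ease-out quadratic
    offsets.push(Math.round(distance * eased));
  }
  return offsets;
}

console.log(dragOffsets(142, 5));
```

In a browser automation script, each offset would be added to the slider handle's starting x coordinate for successive mouse-move calls, with the final offset equal to the full distance.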

Node Flow

Schedule Trigger (every 1 hour) ─┐

├→ Set Puzzle Config → Fetch Puzzle Image → Fetch Background Image

Webhook Trigger (POST) ──────────┘ → Convert Images to Base64 → Solve Slider Puzzle

→ Slider Error? → Format Solution → Return Result

→ Format Error → Return Error

How It Works

1. Dual Triggers

- Schedule Trigger: runs every hour for automated testing or recurring puzzle-solving

- Webhook Trigger: on-demand activation from another workflow or external service

2. Set Puzzle Config

Defines the URLs for the puzzle piece image and background image, plus any optional websiteURL for improved accuracy. In a real integration, these URLs would come from the target site's CAPTCHA challenge response.

3. Fetch Puzzle Image + Fetch Background Image

Two HTTP Request nodes download the puzzle piece and background images as binary data.

4. Convert Images to Base64

A Code node reads the downloaded binary data and encodes it as raw base64 strings. Because the encoding is done directly from the image bytes, the output carries no data:image/...;base64, prefix.

5. Solve Slider Puzzle

The CapSolver node with:

- Resource: Recognition

- Operation: Vision Engine

- module: slider_1

- image: base64 puzzle piece

- imageBackground: base64 background

Returns the distance in pixels instantly.

6. Check Result and Respond

The IF node checks for errors. On success, the solution is formatted and returned. On error, the error message is returned.

Expected Response

Success:

json

{

"success": true,

"module": "slider_1",

"distance": 142,

"unit": "pixels",

"rawSolution": {

"distance": 142

},

"solvedAt": "2026-03-16T10:00:00.000Z"

}

Error:

json

{

"success": false,

"error": "ERROR_INVALID_IMAGE",

"solvedAt": "2026-03-16T10:00:00.000Z"

}

Import This Workflow


json

{

"nodes": [

{

"parameters": {

"content": "## Slider Puzzle Solver \u2014 Fetch & Solve \u2014 Vision Engine\n\n### How it works\n\n1. Triggers every hour or via webhook to start.\n2. Sets puzzle configuration with necessary URLs.\n3. Fetches puzzle and background images.\n4. Converts the images to Base64 format for processing.\n5. Solves the slider puzzle and checks for errors.\n6. Formats and returns results or errors via webhook.\n\n### Setup steps\n\n- [ ] Configure and enable schedule trigger or webhook.\n- [ ] Set up proper URLs for puzzle and background images.\n- [ ] Configure Solve Slider node with appropriate API keys or settings.\n\n### Customization\n\nAdjust the puzzle image URL and background image URL for different puzzles.",

"height": 672,

"width": 480

},

"type": "n8n-nodes-base.stickyNote",

"typeVersion": 1,

"position": [

-1184,

-368

],

"id": "acf16f0f-c0e6-4ad8-91ea-5a3dff2be080",

"name": "Sticky Note"

},

{

"parameters": {

"content": "## Initiate workflow\n\nStarts the workflow either periodically or via an external request.",

"height": 672,

"color": 7

},

"type": "n8n-nodes-base.stickyNote",

"typeVersion": 1,

"position": [

-624,

-368

],

"id": "3de3100b-ee70-409f-8ce5-6b7052e7e177",

"name": "Sticky Note1"

},

{

"parameters": {

"content": "## Configure puzzle settings\n\nSets up the URLs for images required for the puzzle solution.",

"height": 352,

"color": 7

},

"type": "n8n-nodes-base.stickyNote",

"typeVersion": 1,

"position": [

-80,

-192

],

"id": "7d44115c-6e2b-4f92-a077-a6c275561580",

"name": "Sticky Note2"

},

{

"parameters": {

"content": "## Fetch images\n\nDownloads the puzzle and background images based on configured settings.",

"height": 304,

"width": 528,

"color": 7

},

"type": "n8n-nodes-base.stickyNote",

"typeVersion": 1,

"position": [

192,

-128

],

"id": "a06f0443-9cd5-483e-a8d7-12e1294e52e4",

"name": "Sticky Note3"

},

{

"parameters": {

"content": "## Process images\n\nConverts fetched images to Base64 for further processing.",

"height": 320,

"color": 7

},

"type": "n8n-nodes-base.stickyNote",

"typeVersion": 1,

"position": [

768,

-112

],

"id": "cfb5588a-4447-4274-a972-3d4eaaa89d52",

"name": "Sticky Note4"

},

{

"parameters": {

"content": "## Solve puzzle\n\nDelegates puzzle solving and error checking to specialized components.",

"height": 304,

"width": 528,

"color": 7

},

"type": "n8n-nodes-base.stickyNote",

"typeVersion": 1,

"position": [

1136,

-80

],

"id": "7ebc0c4e-5b29-4a5f-b754-89bcca4672f4",

"name": "Sticky Note5"

},

{

"parameters": {

"content": "## Format and return results\n\nFormats the solution or error and sends the response back via webhook.",

"height": 544,

"width": 512,

"color": 7

},

"type": "n8n-nodes-base.stickyNote",

"typeVersion": 1,

"position": [

1792,

-288

],

"id": "86a92570-1529-4ca9-963c-b1d0792222ee",

"name": "Sticky Note6"

},

{

"parameters": {

"rule": {

"interval": [

{

"field": "hours"

}

]

}

},

"id": "ve-slider-201",

"name": "Every 1 Hour",

"type": "n8n-nodes-base.scheduleTrigger",

"typeVersion": 1.2,

"position": [

-576,

-208

]

},

{

"parameters": {

"httpMethod": "POST",

"path": "slider-puzzle-solver",

"responseMode": "responseNode",

"options": {}

},

"id": "ve-slider-202",

"name": "Webhook Trigger",

"type": "n8n-nodes-base.webhook",

"typeVersion": 2,

"position": [

-560,

144

],

"webhookId": "67cb24f0-257f-4a60-8820-d57595d5bb3a"

},

{

"parameters": {

"assignments": {

"assignments": [

{

"id": "puzzleImageURL",

"name": "puzzleImageURL",

"value": "={{ $json.body?.puzzleImageURL || $json.puzzleImageURL || '' }}",

"type": "string"

},

{

"id": "backgroundImageURL",

"name": "backgroundImageURL",

"value": "={{ $json.body?.backgroundImageURL || $json.backgroundImageURL || '' }}",

"type": "string"

},

{

"id": "websiteURL",

"name": "websiteURL",

"value": "={{ $json.body?.websiteURL || $json.websiteURL || '' }}",

"type": "string"

}

]

},

"options": {}

},

"id": "ve-slider-203",

"name": "Set Puzzle Config",

"type": "n8n-nodes-base.set",

"typeVersion": 3.4,

"position": [

-32,

0

]

},

{

"parameters": {

"url": "={{ $json.puzzleImageURL }}",

"options": {

"response": {

"response": {

"responseFormat": "file"

}

}

}

},

"id": "ve-slider-204",

"name": "Fetch Puzzle Image",

"type": "n8n-nodes-base.httpRequest",

"typeVersion": 4.2,

"position": [

240,

0

]

},

{

"parameters": {

"url": "={{ $('Set Puzzle Config').first().json.backgroundImageURL }}",

"options": {

"response": {

"response": {

"responseFormat": "file"

}

}

}

},

"id": "ve-slider-205",

"name": "Fetch Background Image",

"type": "n8n-nodes-base.httpRequest",

"typeVersion": 4.2,

"position": [

576,

0

]

},

{

"parameters": {

"jsCode": "const puzzleBinary = $input.first().binary;\nconst config = $('Set Puzzle Config').first().json;\nif (!puzzleBinary || !puzzleBinary.data) {\n return [{ json: { error: 'Failed to fetch puzzle image' } }];\n}\nconst puzzleBuffer = await this.helpers.getBinaryDataBuffer(0, 'data');\nconst puzzleBase64 = puzzleBuffer.toString('base64');\nlet backgroundBase64 = '';\ntry {\n const bgBinary = $input.first().binary;\n if (bgBinary && bgBinary.data) {\n const bgBuffer = await this.helpers.getBinaryDataBuffer(0, 'data');\n backgroundBase64 = bgBuffer.toString('base64');\n }\n} catch (e) {}\nreturn [{ json: { image: puzzleBase64, imageBackground: backgroundBase64, websiteURL: config.websiteURL || '', module: 'slider_1' } }];"

},

"id": "ve-slider-206",

"name": "Convert to Base64",

"type": "n8n-nodes-base.code",

"typeVersion": 2,

"position": [

816,

48

]

},

{

"parameters": {

"resource": "Recognition",

"operation": "Vision Engine",

"websiteURL": "={{ $json.websiteURL }}",

"module": "={{ $json.module }}",

"image": "={{ $json.image }}",

"imageBackground": "={{ $json.imageBackground }}",

"optional": {}

},

"id": "ve-slider-207",

"name": "Solve Slider",

"type": "n8n-nodes-capsolver.capSolver",

"typeVersion": 1,

"position": [

1184,

48

],

"credentials": {

"capSolverApi": {

"id": "BeBFMAsySMsMGeE9",

"name": "CapSolver account"

}

},

"onError": "continueRegularOutput"

},

{

"parameters": {

"conditions": {

"options": {

"version": 2,

"leftValue": "",

"caseSensitive": true,

"typeValidation": "strict"

},

"combinator": "and",

"conditions": [

{

"id": "slider-err-check",

"operator": {

"type": "string",

"operation": "isNotEmpty",

"singleValue": true

},

"leftValue": "={{ $json.error }}",

"rightValue": ""

}

]

},

"options": {}

},

"id": "ve-slider-208",

"name": "Slider Error?",

"type": "n8n-nodes-base.if",

"typeVersion": 2.2,

"position": [

1520,

48

]

},

{

"parameters": {

"jsCode": "const solution = $input.first().json.data?.solution || $input.first().json.data || {};\nconst distance = solution.distance || solution.slide_distance || null;\nreturn [{ json: { success: true, module: 'slider_1', distance: distance, unit: 'pixels', rawSolution: solution, solvedAt: new Date().toISOString() } }];"

},

"id": "ve-slider-209",

"name": "Format Solution",

"type": "n8n-nodes-base.code",

"typeVersion": 2,

"position": [

1840,

-160

]

},

{

"parameters": {

"jsCode": "return [{ json: { success: false, error: $input.first().json.error || 'Unknown Vision Engine error', solvedAt: new Date().toISOString() } }];"

},

"id": "ve-slider-210",

"name": "Format Error",

"type": "n8n-nodes-base.code",

"typeVersion": 2,

"position": [

1840,

80

]

},

{

"parameters": {

"respondWith": "json",

"responseBody": "={{ $json }}",

"options": {}

},

"id": "ve-slider-211",

"name": "Return Result",

"type": "n8n-nodes-base.respondToWebhook",

"typeVersion": 1.1,

"position": [

2160,

-160

]

},

{

"parameters": {

"respondWith": "json",

"responseBody": "={{ $json }}",

"options": {

"responseCode": 400

}

},

"id": "ve-slider-212",

"name": "Return Error",

"type": "n8n-nodes-base.respondToWebhook",

"typeVersion": 1.1,

"position": [

2160,

80

]

}

],

"connections": {

"Every 1 Hour": {

"main": [

[

{

"node": "Set Puzzle Config",

"type": "main",

"index": 0

}

]

]

},

"Webhook Trigger": {

"main": [

[

{

"node": "Set Puzzle Config",

"type": "main",

"index": 0

}

]

]

},

"Set Puzzle Config": {

"main": [

[

{

"node": "Fetch Puzzle Image",

"type": "main",

"index": 0

}

]

]

},

"Fetch Puzzle Image": {

"main": [

[

{

"node": "Fetch Background Image",

"type": "main",

"index": 0

}

]

]

},

"Fetch Background Image": {

"main": [

[

{

"node": "Convert to Base64",

"type": "main",

"index": 0

}

]

]

},

"Convert to Base64": {

"main": [

[

{

"node": "Solve Slider",

"type": "main",

"index": 0

}

]

]

},

"Solve Slider": {

"main": [

[

{

"node": "Slider Error?",

"type": "main",

"index": 0

}

]

]

},

"Slider Error?": {

"main": [

[

{

"node": "Format Error",

"type": "main",

"index": 0

}

],

[

{

"node": "Format Solution",

"type": "main",

"index": 0

}

]

]

},

"Format Solution": {

"main": [

[

{

"node": "Return Result",

"type": "main",

"index": 0

}

]

]

},

"Format Error": {

"main": [

[

{

"node": "Return Error",

"type": "main",

"index": 0

}

]

]

}

},

"pinData": {},

"meta": {

"instanceId": "962ff0267b713be0344b866fa54daae28de8ed2144e2e6867da355dae193ea1f"

}

}

Test It

Testing the Solver API

Once you have configured the CapSolver credential and activated the workflow, test the Solver API:

Slider puzzle:

bash

curl -X POST https://your-n8n-instance.com/webhook/vision-engine-solver \

-H "Content-Type: application/json" \

-d '{

"module": "slider_1",

"image": "BASE64_PUZZLE_PIECE_HERE",

"imageBackground": "BASE64_BACKGROUND_HERE"

}'

Rotation puzzle:

bash

curl -X POST https://your-n8n-instance.com/webhook/vision-engine-solver \

-H "Content-Type: application/json" \

-d '{

"module": "rotate_1",

"image": "BASE64_IMAGE_TO_ROTATE"

}'

Object selection (shein):

bash

curl -X POST https://your-n8n-instance.com/webhook/vision-engine-solver \

-H "Content-Type: application/json" \

-d '{

"module": "shein",

"image": "BASE64_IMAGE",

"question": "Select all shoes"

}'

GIF OCR:

bash

curl -X POST https://your-n8n-instance.com/webhook/vision-engine-solver \

-H "Content-Type: application/json" \

-d '{

"module": "ocr_gif",

"image": "BASE64_GIF_DATA"

}'

Testing the Slider Puzzle Solver

bash

curl -X POST https://your-n8n-instance.com/webhook/slider-puzzle-solver \

-H "Content-Type: application/json" \

-d '{

"puzzleImageURL": "https://example.com/captcha/puzzle-piece.png",

"backgroundImageURL": "https://example.com/captcha/background.png",

"websiteURL": "https://example.com"

}'

A response with a numeric distance value confirms that the full pipeline worked: the images were fetched and converted to base64, Vision Engine solved the slider, and the pixel distance was returned.

Understanding the Response

Vision Engine returns different solution shapes depending on the module:

slider_1

json

{

"solution": {

"distance": 142

}

}

The distance is in pixels: it is how far the slider handle must be dragged to the right to complete the puzzle.
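One practical caveat, stated here as an assumption rather than something this article confirms: if the distance is measured in the pixel grid of the submitted background image, and the page displays that image at a different width, the value would need to be rescaled before dragging. A minimal sketch, with `scaleDistance` as a hypothetical helper:

```javascript
// Hypothetical helper: rescales a distance measured on the original image
// to the width at which the page actually renders that image.
function scaleDistance(distance, naturalWidth, renderedWidth) {
  if (!(naturalWidth > 0) || !(renderedWidth > 0)) {
    throw new RangeError('widths must be positive numbers');
  }
  return Math.round(distance * (renderedWidth / naturalWidth));
}

// A 680px-wide image shown at 340px halves the drag distance.
console.log(scaleDistance(142, 680, 340));
```

When the image is displayed at its natural size, the helper returns the distance unchanged.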

rotate_1 / rotate_2

json

{

"solution": {

"angle": 73

}

}

The angle is in degrees: it is how much the image must be rotated (clockwise) to reach the correct orientation.

shein

json

{

"solution": {

"rects": [

{ "x1": 45, "y1": 120, "x2": 180, "y2": 250 },

{ "x1": 300, "y1": 90, "x2": 420, "y2": 210 }

]

}

}

Each rect in the array is a bounding box (top-left and bottom-right coordinates) for a matching area in the image.
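If the downstream step needs a single point per match (for example, a place to click), the center of each box is a natural choice. The sketch below assumes the coordinates are relative to the top-left corner of the submitted image; `rectCenters` is a hypothetical helper.

```javascript
// Hypothetical helper: converts bounding boxes into their center points.
function rectCenters(rects) {
  return rects.map(({ x1, y1, x2, y2 }) => ({
    x: Math.round((x1 + x2) / 2),
    y: Math.round((y1 + y2) / 2),
  }));
}

const centers = rectCenters([
  { x1: 45, y1: 120, x2: 180, y2: 250 },
  { x1: 300, y1: 90, x2: 420, y2: 210 },
]);
console.log(centers);
```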

ocr_gif

json

{

"solution": {

"text": "x7Km9"

}

}

The text is the recognized string from the animated GIF.
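Because each module answers in a different field, downstream code usually needs a small dispatch step. The following sketch maps each module to the field shown in the examples above; `extractAnswer` and `ANSWER_FIELD` are hypothetical names.

```javascript
// Field that carries the answer for each module, per the examples above.
const ANSWER_FIELD = new Map([
  ['slider_1', 'distance'],
  ['rotate_1', 'angle'],
  ['rotate_2', 'angle'],
  ['shein', 'rects'],
  ['ocr_gif', 'text'],
]);

// Hypothetical helper: picks the module-specific answer out of a solution object.
function extractAnswer(moduleName, solution) {
  const field = ANSWER_FIELD.get(moduleName);
  if (!field) throw new Error(`Unsupported module: ${moduleName}`);
  if (!solution || solution[field] === undefined || solution[field] === null) {
    throw new Error(`Solution for ${moduleName} has no "${field}" field`);
  }
  return { field, value: solution[field] };
}

console.log(extractAnswer('slider_1', { distance: 142 }));
console.log(extractAnswer('ocr_gif', { text: 'x7Km9' }));
```

Throwing on a missing field surfaces a malformed response early instead of passing `undefined` to the next step.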

Adapting for Other Module Types

The Solver API workflow already supports all five modules via the request body. To build a dedicated workflow for a different module — say, rotate_1 for rotation puzzles — the changes are minimal:

- Config node: Replace puzzleImageURL/backgroundImageURL with just the rotation image URL

- Fetch nodes: Only one HTTP Request needed (no background image for rotate_1)

- CapSolver node: Change module to rotate_1

- Format Solution: Extract angle instead of distance

For shein, you would also add the question parameter to the config and pass it through to the CapSolver node.

Troubleshooting

"ERROR_INVALID_IMAGE"

The base64 string is malformed or empty. Check that:

- The image was fetched successfully (HTTP 200)

- The binary-to-base64 conversion produced a non-empty string

- The data:image/...;base64, prefix was stripped

- The base64 string has no newlines or spaces
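The string-level checks in this list can be automated before the image is sent. The sketch below is a hypothetical diagnostic (`diagnoseBase64` is not part of the CapSolver node); it reports which of the problems above apply to a given value.

```javascript
// Hypothetical helper: returns a list of problems found in a base64 image string.
// An empty list means none of the checks below failed.
function diagnoseBase64(value) {
  if (typeof value !== 'string' || value.length === 0) {
    return ['empty or not a string'];
  }
  const problems = [];
  if (/^data:/i.test(value)) problems.push('has a data URI prefix');
  if (/\s/.test(value)) problems.push('contains whitespace or newlines');

  // Check the remaining characters after removing the issues already reported.
  const body = value.replace(/^data:[^,]*,/i, '').replace(/\s+/g, '');
  if (!/^[A-Za-z0-9+/]*={0,2}$/.test(body)) {
    problems.push('contains non-base64 characters');
  }
  return problems;
}

console.log(diagnoseBase64('data:image/png;base64,iVBORw0KGgo='));
console.log(diagnoseBase64('/9j/4AAQSkZJRgABA'));
```

This only inspects the string itself; it cannot tell whether the decoded bytes form a valid image.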

"ERROR_INVALID_MODULE"

The module value does not match any supported model. Use exactly one of: slider_1, rotate_1, rotate_2, shein, ocr_gif.

Distance Returns 0 or Null

The images may not be a valid slider puzzle pair. Check that:

- image is the puzzle piece (the small draggable fragment)

- imageBackground is the full background with the missing slot visible

- Both images are from the same challenge instance

- The images are not corrupted or too small

CapSolver Node Shows No "Vision Engine" Option

Make sure you have n8n-nodes-capsolver version 1.x or later installed. The Vision Engine operation was added in recent versions. Update the community node if needed:

- Go to Settings > Community Nodes

- Find n8n-nodes-capsolver

- Update to the latest version

- Restart n8n

Webhook Returns 404

The workflow must be active for the webhook to be live. Import the workflow, configure credentials, then toggle the workflow to active in n8n.

Best Practices

- Use raw base64 strings: always strip the data:image/...;base64, prefix before passing images to the CapSolver node.

- Match images to modules: slider_1 needs both image and imageBackground. rotate_1 needs only image. shein needs image plus question. Using the wrong combination will fail or return incorrect results.

- Fetch images fresh: visual puzzle images are typically single-use and expire quickly. Fetch them as close to solve time as possible.

- Vision Engine is instant: unlike Token operations that poll for results, Recognition operations return immediately. Your workflow does not need retry logic or polling delays.

- No proxy needed: Vision Engine analyzes images server-side. There is no browser interaction with a target site, so no proxy is required.

- Validate before solving: check that the image data is present and the module name is valid before calling the CapSolver node. This avoids wasting API credits on requests that will fail.

- Use websiteURL when available: while optional, providing the source page URL can improve accuracy for some puzzle types.

- Handle module-specific responses: different modules return different fields (distance, angle, rects, text). Your downstream logic should check which module was used and extract the correct field.

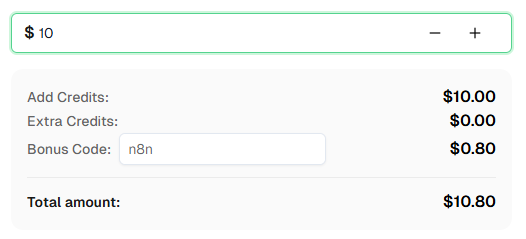

Ready to get started? Sign up for CapSolver and use bonus code n8n for an extra 8% bonus on your first recharge!

Conclusion

Vision Engine fills a different gap than CapSolver's Token operations. Where reCAPTCHA, Turnstile, and Cloudflare Challenge solving returns tokens that you submit to bypass a gate, Vision Engine returns measurements that your automation uses to interact with visual puzzles — dragging sliders, rotating images, selecting objects, or reading animated text.

The key differences to remember:

- Recognition resource, not Token — instant results, no polling

- Base64 images in, measurements out — pixels, degrees, coordinates, or text

- No proxy needed — the AI analyzes images server-side

- Five modules — each designed for a specific visual puzzle type

The two workflows in this article cover the two most common integration patterns:

- Solver API — a generic webhook endpoint that accepts any module and returns the solution

- Slider Puzzle Solver — a complete fetch-convert-solve pipeline for slider CAPTCHAs

Both import as inactive. Configure your CapSolver credential, replace the placeholder values, activate the workflow, and test.

Frequently Asked Questions

How is Vision Engine different from Image To Text (OCR)?

Image To Text recognizes characters in a static image — standard OCR. Vision Engine goes further: it understands spatial relationships in visual puzzles. It can calculate slider distances, rotation angles, object bounding boxes, and even read text from animated GIFs. They are both Recognition operations (instant result, no polling), but they solve different types of problems.

Do I need a proxy for Vision Engine?

No. Vision Engine analyzes the images you provide server-side. There is no browser session, no cookie, and no interaction with a target website. Proxies are not needed and the CapSolver node does not accept a proxy parameter for Vision Engine tasks.

Can I solve multiple puzzles in one workflow execution?

Yes. The CapSolver node processes one item at a time, but n8n's item-based execution means you can pass multiple items through the node. Each item gets its own Recognition call and returns its own solution. Use a Split In Batches node or feed multiple items from a Code node.

What image formats are supported?

The image and imageBackground fields accept base64-encoded JPEG, PNG, GIF, and WebP. The base64 string must be raw — no data:image/...;base64, prefix, no newlines.
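If your image source hands you a data URL or line-wrapped base64, you can normalize it before solving. The helper below is a minimal Python sketch with a hypothetical name (`to_raw_base64`). It strips the prefix and whitespace and confirms the result decodes, but it does not check that the decoded bytes are actually a JPEG, PNG, GIF, or WebP.

```python
import base64
import binascii
import re

# Matches a leading "data:image/<subtype>;base64," prefix.
_DATA_URL_PREFIX = re.compile(r"^data:image/[A-Za-z0-9.+-]+;base64,")


def to_raw_base64(value):
    """Strip a data-URL prefix and all whitespace, then confirm the result decodes."""
    cleaned = _DATA_URL_PREFIX.sub("", value.strip())
    cleaned = re.sub(r"\s+", "", cleaned)  # remove newlines and stray spaces
    if not cleaned:
        raise ValueError("image is empty")
    try:
        # validate=True rejects characters outside the base64 alphabet.
        base64.b64decode(cleaned, validate=True)
    except binascii.Error as exc:
        raise ValueError("image is not valid base64") from exc
    return cleaned
```

Calling this on both image and imageBackground before the solve step catches the most common formatting mistakes early.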

How do I get the puzzle images from a real site?

In a real slider CAPTCHA integration, the target site serves the puzzle images as part of the challenge response. Typically you would:

1. Load the page (via HTTP Request or browser automation)

2. Extract the image URLs from the CAPTCHA widget's DOM or network requests

3. Fetch the images

4. Convert to base64

5. Send to Vision Engine

The Slider Puzzle Solver workflow demonstrates steps 3-5. Steps 1-2 depend on the specific target site.
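For readers who want to see the convert and send steps outside n8n, here is a minimal Python sketch that turns already-fetched image bytes into a request body. The function name is hypothetical. The `VisionEngine` task type, the `clientKey` field, and the assumption that image is the slider piece while imageBackground is the background reflect my understanding of CapSolver's HTTP API and should be checked against its documentation. Inside n8n, the CapSolver node builds this request for you.

```python
import base64
import json


def build_slider_task(client_key, piece_bytes, background_bytes, website_url=None):
    """Build a JSON-serializable request body for a slider_1 solve."""
    task = {
        "type": "VisionEngine",  # assumed task type name
        "module": "slider_1",
        # Raw base64 with no data-URL prefix and no newlines.
        "image": base64.b64encode(piece_bytes).decode("ascii"),
        "imageBackground": base64.b64encode(background_bytes).decode("ascii"),
    }
    if website_url:
        task["websiteURL"] = website_url  # optional, per the best practices
    return {"clientKey": client_key, "task": task}


# Example with placeholder bytes; real bytes come from the fetch step.
payload = build_slider_task("YOUR_API_KEY", b"piece", b"background")
body = json.dumps(payload)  # the string you would POST to the solver endpoint
```

The sketch stops short of the network call so it stays runnable without credentials. Sending `body` is a single POST, with no follow-up polling request.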

What does the question parameter do?

The question parameter is only used by the shein module. It tells the AI what to look for in the image — for example, "Select all shoes" or "Tap the matching items." For all other modules, leave it empty.

Can I use Vision Engine for hCaptcha image challenges?

Vision Engine's modules (slider_1, rotate_1, rotate_2, shein, ocr_gif) are designed for specific visual puzzle types. hCaptcha image classification challenges use a different approach. Check the CapSolver documentation for hCaptcha-specific solutions.

How fast is Vision Engine?

Vision Engine is a Recognition operation, which means the result comes back in a single API call — typically under 2 seconds. There is no polling loop, no getTaskResult calls, and no timeout waiting. This makes it significantly faster than Token operations, which can take 10-30 seconds to complete.

What happens if the image is too small or too large?

Very small images may not contain enough detail for accurate analysis. Very large images will increase the base64 payload size and may slow down the request. For best results, use the original resolution provided by the CAPTCHA challenge — do not resize the images.

Can I chain Vision Engine with browser automation?

Yes, and that is the intended use case for most real-world applications. The typical flow is:

1. Browser automation (Puppeteer/Playwright via n8n) loads the page

2. The CAPTCHA challenge appears with puzzle images

3. Your workflow extracts the image URLs and fetches them

4. Vision Engine returns the solution (distance, angle, etc.)

5. Browser automation uses the solution to complete the challenge (drag slider, rotate image, click coordinates)

The Vision Engine workflow handles steps 3-4. Steps 1-2 and 5 are handled by your browser automation nodes.
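For the final step, your browser automation needs to turn the returned distance into mouse movement. The Python sketch below shows one simple way to do that for a slider. Both helper names are hypothetical, and it assumes the distance is measured in pixels of the original image, so it must be scaled if the page renders the puzzle at a different width.

```python
def scaled_distance(distance, natural_width, rendered_width):
    """Convert a distance in image pixels to on-screen pixels."""
    if natural_width <= 0 or rendered_width <= 0:
        raise ValueError("widths must be positive")
    return distance * rendered_width / natural_width


def drag_offsets(distance, steps=10):
    """Split a horizontal drag into cumulative x offsets, ending at `distance`."""
    if distance < 0:
        raise ValueError("distance must be non-negative")
    if steps < 1:
        raise ValueError("steps must be at least 1")
    return [round(distance * i / steps) for i in range(1, steps + 1)]


# A 142 px answer on a 340 px wide image rendered at 170 px wide.
on_screen = scaled_distance(142, natural_width=340, rendered_width=170)
path = drag_offsets(on_screen, steps=4)
```

Each offset in `path` is added to the slider handle's starting x coordinate, and the browser automation node moves the mouse through those points before releasing.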
