How to Handle Web Scraping Blocks: Practical Methods That Work

Ethan Collins

Pattern Recognition Specialist

TL;DR:

- Understand the Mechanism: Websites use IP tracking, browser fingerprinting, and behavioral analysis to identify and block automated scripts.

- Implement Rotation: Use rotating residential proxies and diverse User-Agent strings to mimic human-like traffic patterns.

- Handle Challenges: Integrate specialized tools to resolve CAPTCHAs and manage complex bot detection systems efficiently.

- Stay Ethical: Always follow robots.txt guidelines and implement request throttling to maintain a low impact on target servers.

Introduction

Web scraping has become an essential component of modern data-driven decision-making, yet automated data collection keeps getting harder as websites deploy more sophisticated security measures. Knowing how to handle web scraping blocks is no longer just an advantage; it is a necessity for any extraction project. This guide explains why blocks occur, how the underlying detection mechanisms work, and which effective, ethical strategies keep your scrapers operational. Whether you are a developer building a custom crawler or a data analyst overseeing a large-scale operation, these practical methods will help you maintain consistent access to the information you need.

Understanding the Nature of Web Scraping Blocks

To effectively manage obstacles, one must first understand what they are and why they exist. A web scraping block is a defensive measure implemented by a website to prevent automated scripts from accessing its content. These measures are often part of a broader security strategy designed to protect server resources, prevent intellectual property theft, or maintain the integrity of user data.

According to recent industry data, automated traffic accounts for a significant share of all web requests, which has led many platforms to adopt aggressive filtering. You can find more details on global trends in the Statista Bot Traffic Data report. When a server detects patterns that deviate from typical human behavior, it may respond by serving a CAPTCHA, throttling the connection, or banning the IP address outright. Recognizing which of these responses you are facing is the first step toward handling it without losing data.
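A practical first step is telling these responses apart in code. The sketch below is a minimal example, not a complete block detector: it treats HTTP 429 (Too Many Requests) and 503 (Service Unavailable) as "slow down" signals and computes a retry delay, honoring the standard Retry-After header when it carries a number of seconds. The function name `backoff_delay` and the base and cap values are illustrative assumptions. A 403 or a CAPTCHA page usually signals a harder block that retrying alone will not fix, so the function returns None for those.

```python
from typing import Mapping, Optional


def backoff_delay(status: int, headers: Mapping[str, str], attempt: int,
                  base: float = 2.0, cap: float = 300.0) -> Optional[float]:
    """Seconds to wait before retrying, or None when a retry won't help.

    429 and 503 are treated as "slow down" signals. A Retry-After value given
    in seconds is honored (the HTTP-date form is not handled in this sketch);
    otherwise the wait doubles with each attempt, capped at `cap` seconds.
    """
    if status not in (429, 503):
        # Not a rate-limit signal: success, or e.g. a 403 block that
        # retrying won't fix.
        return None
    retry_after = headers.get("Retry-After", "").strip()
    if retry_after.isdigit():
        return min(float(retry_after), cap)
    # Exponential backoff: base, 2*base, 4*base, ... up to the cap.
    return min(base * (2 ** attempt), cap)
```

With the requests library, `response.headers` is case-insensitive, so it can be passed directly; a plain dict must use the exact "Retry-After" spelling.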

Technical Background: How Detection Mechanisms Work

Modern security systems do not rely on a single factor to identify bots. Instead, they use a combination of techniques to build a risk profile for every incoming request.

1. IP-Based Tracking

This is the most fundamental layer of defense. Servers count the requests coming from each IP address over a given time window, and if the count exceeds a predefined threshold, the IP is flagged. That is why handling blocks at the network level matters so much. IP ranges belonging to data centers are often blocked pre-emptively, because legitimate human visitors rarely browse from them.
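To make the threshold idea concrete, the sketch below models the server side of this check as a per-IP sliding-window counter. The class name and the limits are hypothetical; real sites choose their own thresholds and rarely publish them.

```python
from collections import defaultdict, deque
from typing import Deque, Dict


class SlidingWindowLimiter:
    """Flags an IP once it sends more than `max_requests` within `window_seconds`."""

    def __init__(self, max_requests: int = 100, window_seconds: float = 60.0):
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        # One queue of request timestamps per IP address.
        self._hits: Dict[str, Deque[float]] = defaultdict(deque)

    def is_flagged(self, ip: str, now: float) -> bool:
        """Record a request from `ip` at time `now`; report whether it is over the limit."""
        hits = self._hits[ip]
        hits.append(now)
        # Discard timestamps that have slid out of the window.
        while hits and now - hits[0] > self.window_seconds:
            hits.popleft()
        return len(hits) > self.max_requests
```

For example, with `SlidingWindowLimiter(max_requests=3, window_seconds=10)`, a fourth request from the same IP within ten seconds is flagged, while a request from that IP twenty seconds later is not, because the earlier timestamps have expired.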

2. Browser Fingerprinting

Beyond the IP address, websites can collect a vast array of information from your browser environment. This includes your screen resolution, installed fonts, time zone, and hardware specifications. If these details appear inconsistent or too "clean" (typical of headless browsers), the system identifies the request as automated.
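To see why rotating IPs alone is not enough, consider how collected attributes become an identifier. Real fingerprinting scripts run as JavaScript in the page and gather far more signals; this simplified Python sketch uses hypothetical attribute names and only shows the core idea: the same browser environment produces the same hash no matter which IP address it connects from.

```python
import hashlib
import json
from typing import Any, Dict


def fingerprint_id(attributes: Dict[str, Any]) -> str:
    """Collapse browser attributes into one stable identifier.

    Keys are sorted before hashing, so the result does not depend on the
    order in which the attributes were collected.
    """
    canonical = json.dumps(attributes, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Hypothetical attributes a fingerprinting script might report.
visitor = {
    "screen": "1920x1080",
    "timezone": "Europe/Berlin",
    "fonts": ["Arial", "Calibri"],
    "hardwareConcurrency": 8,
}
print(fingerprint_id(visitor))
```

Changing any single attribute, such as the time zone, produces a completely different hash, which is also why an environment that mismatches its proxy's location can stand out.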

3. Behavioral Analysis

Sophisticated platforms track how a user interacts with the page. Humans move the mouse along irregular paths, pause to read content, and click with varying rhythm. A script, in contrast, might jump directly to a URL and extract data in milliseconds. Deviations from expected human behavior raise the request's risk score, which makes behavior-based detection one of the hardest obstacles to work around.
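A toy version of one behavioral signal, action timing, is sketched below. It flags a session whose actions are either too fast on average or too evenly spaced, measured by the coefficient of variation (standard deviation divided by mean) of the gaps between them. The function name and both thresholds are hypothetical; production systems combine many more signals, such as mouse paths and scroll depth.

```python
import statistics
from typing import List


def looks_automated(timestamps: List[float],
                    min_mean_gap: float = 1.0,
                    min_cv: float = 0.2) -> bool:
    """Heuristic bot check on the timing between consecutive actions.

    `timestamps` must be sorted (seconds). A session is flagged when the
    average gap is below `min_mean_gap` seconds, or when the gaps are
    suspiciously regular (coefficient of variation below `min_cv`).
    """
    if len(timestamps) < 3:
        return False  # too little data to judge
    gaps = [later - earlier for earlier, later in zip(timestamps, timestamps[1:])]
    mean_gap = statistics.mean(gaps)
    if mean_gap < min_mean_gap:
        return True  # faster than a person could plausibly act
    cv = statistics.pstdev(gaps) / mean_gap
    return cv < min_cv  # near-constant spacing looks machine-generated
```

A script that fires exactly every two seconds is flagged for its regularity even though it is not especially fast, which is why fixed delays are a weaker defense than randomized ones.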

Common Types of CAPTCHA Challenges

When a system is uncertain but suspicious, it will often present a CAPTCHA. Understanding these types is crucial for knowing how to handle web scraping blocks effectively.

| CAPTCHA Type | Description | Primary Detection Logic |
|---|---|---|
| Image Recognition | Users must select specific objects (e.g., traffic lights) from a grid. | Tests the ability to process visual data and identifies human-like clicking patterns. |
| Invisible CAPTCHA | Runs in the background without user interaction. | Analyzes browser environment and historical behavior to assign a risk score. |
| Text/Math Challenges | Requires solving a simple equation or typing distorted text. | Relies on the difficulty of OCR (Optical Character Recognition) for older bots. |
| Puzzle/Slider | Users must drag a piece to complete an image. | Focuses on the physical movement of the cursor and the timing of the action. |

Practical Methods to Handle Web Scraping Blocks

Implementing the right technical strategies can significantly reduce the likelihood of being detected. Here are the most effective methods used by professionals today.

Use Rotating Residential Proxies

Since IP bans are common, a pool of residential proxies is one of the best ways to avoid them and keep success rates high, which makes such proxies a cornerstone of web scraping best practices. Unlike data center IPs, residential IPs belong to real home internet connections, so they are much harder to distinguish from legitimate users. Rotating to a new IP every few requests spreads your traffic across the pool and keeps each address below the rate-limit threshold.
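Rotating "every few requests" can be as simple as a round-robin pool that advances after a fixed number of uses. In this sketch the class name, the proxy URLs, and `per_proxy=3` are placeholders chosen for illustration.

```python
from itertools import cycle
from typing import Sequence


class ProxyRotator:
    """Hands out proxies round-robin, switching after every `per_proxy` requests."""

    def __init__(self, proxies: Sequence[str], per_proxy: int = 3):
        if not proxies:
            raise ValueError("proxy list must not be empty")
        self._pool = cycle(proxies)  # endless round-robin over the pool
        self._per_proxy = per_proxy
        self._current = next(self._pool)
        self._used = 0

    def next_proxy(self) -> str:
        """Return the proxy to use for the next request."""
        if self._used >= self._per_proxy:
            self._current = next(self._pool)
            self._used = 0
        self._used += 1
        return self._current


# Placeholder proxy URLs; substitute your provider's endpoints.
rotator = ProxyRotator([
    "http://user:pass@proxy1.example.com:8000",
    "http://user:pass@proxy2.example.com:8000",
])
```

With the requests library, each returned URL would go into the `proxies` argument, for example `requests.get(url, proxies={"http": p, "https": p})` where `p = rotator.next_proxy()`.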

Manage Request Headers and User-Agents

Every HTTP request includes headers that tell the server about the client. A common mistake is sending a library's default header, such as "python-requests/2.25.1", which immediately marks the request as scripted. Instead, use a diverse set of real User-Agent strings; the MDN User-Agent Documentation explains how these strings are structured. Also include fields like "Accept-Language" and "Referer" so your requests resemble a real browsing session.
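A minimal sketch of a header set built this way is shown below. The User-Agent strings follow the standard Chrome, Firefox, and Safari formats but will age quickly, so treat the pool as a placeholder to refresh; the `build_headers` function name is hypothetical.

```python
import random
from typing import Dict, Optional

# Example desktop User-Agent strings (placeholder pool; keep it current,
# since outdated browser versions stand out).
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/124.0.0.0 Safari/537.36",
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:125.0) "
    "Gecko/20100101 Firefox/125.0",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/605.1.15 "
    "(KHTML, like Gecko) Version/17.4 Safari/605.1.15",
]


def build_headers(referer: Optional[str] = None,
                  rng: Optional[random.Random] = None) -> Dict[str, str]:
    """Build browser-like request headers with a randomly chosen User-Agent."""
    if rng is None:
        rng = random.Random()
    headers = {
        "User-Agent": rng.choice(USER_AGENTS),
        "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",
        "Accept-Language": "en-US,en;q=0.9",
    }
    if referer:
        # Only send a Referer when there is a plausible previous page.
        headers["Referer"] = referer
    return headers
```

With requests, this plugs in as `requests.get(url, headers=build_headers("https://www.example.com/"))`. Picking one User-Agent per session, rather than per request, keeps a session's identity consistent.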

Implement Request Throttling

Speed is often the biggest giveaway for a bot. Adding random delays between requests simulates human browsing behavior. This technique, known as throttling, keeps you from overwhelming the target server and reduces the chance of triggering rate-limiting alarms. Together with the practices above, it helps you avoid IP bans and maintain access to your target data during large-scale operations.
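Throttling can be wrapped around any sequence of URLs with a small generator. In this sketch the 2 to 6 second range is an arbitrary example, and `throttled` is a hypothetical helper name; the `sleep` and `rng` parameters are injectable so the behavior can be tested without actually waiting.

```python
import random
import time
from typing import Callable, Iterable, Iterator, Optional, TypeVar

T = TypeVar("T")


def throttled(items: Iterable[T], min_delay: float = 2.0, max_delay: float = 6.0,
              sleep: Callable[[float], None] = time.sleep,
              rng: Optional[random.Random] = None) -> Iterator[T]:
    """Yield items one at a time, pausing a random interval between them.

    Each pause is drawn uniformly from [min_delay, max_delay] seconds, so the
    request rhythm is irregular rather than a fixed, machine-like interval.
    """
    if rng is None:
        rng = random.Random()
    for index, item in enumerate(items):
        if index > 0:  # no need to wait before the very first request
            sleep(rng.uniform(min_delay, max_delay))
        yield item
```

Usage would look like `for url in throttled(urls): fetch(url)`, where `fetch` stands in for whatever request function your scraper uses. If a site publishes a crawl delay in robots.txt, setting `min_delay` to at least that value is a sensible choice.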

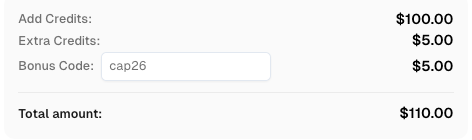

Use code CAP26 when signing up at CapSolver to receive bonus credits!

Solve CAPTCHAs Automatically

Even with well-configured headers and proxies, you will eventually encounter a challenge, and this is where specialized services become invaluable. CapSolver, for instance, provides an API that resolves various challenge types, such as reCAPTCHA and Friendly Captcha, so your automated workflows can continue uninterrupted. Such tools are a core part of handling web scraping blocks in modern environments.

If you are using tools like cURL or Python for your automation, you can integrate a solution following this general workflow:

- Submit the Task: Send the CAPTCHA details (site key, URL) to the service.

- Retrieve the Solution: Poll the API using the Task ID until the solution is ready.

- Submit the Token: Use the returned token to bypass the challenge on the target site.
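The three steps above map onto two HTTP calls. This sketch uses only Python's standard library. The endpoint paths (`/createTask`, `/getTaskResult`) and response fields (`errorId`, `taskId`, `status`, `solution`) follow CapSolver's public API documentation as we understand it, so verify them against the current docs before relying on this. The poll interval and timeout are arbitrary defaults, and the `post` and `sleep` parameters are injectable so the polling logic can be tested without network access.

```python
import json
import time
import urllib.request
from typing import Any, Callable, Dict

# Base URL per CapSolver's docs (assumption; confirm before use).
API_BASE = "https://api.capsolver.com"

Json = Dict[str, Any]


def post_json(url: str, payload: Json) -> Json:
    """POST `payload` as JSON and decode the JSON response."""
    req = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=30) as resp:
        return json.load(resp)


def solve_task(client_key: str, task: Json,
               post: Callable[[str, Json], Json] = post_json,
               poll_interval: float = 3.0, timeout: float = 120.0,
               sleep: Callable[[float], None] = time.sleep) -> Json:
    """Submit a task, then poll until its solution is ready or `timeout` elapses."""
    # Step 1: submit the task and keep its ID.
    created = post(f"{API_BASE}/createTask", {"clientKey": client_key, "task": task})
    if created.get("errorId"):
        raise RuntimeError(f"createTask failed: {created}")
    task_id = created["taskId"]

    # Step 2: poll for the result until it is ready.
    waited = 0.0
    while waited < timeout:
        sleep(poll_interval)
        waited += poll_interval
        result = post(f"{API_BASE}/getTaskResult",
                      {"clientKey": client_key, "taskId": task_id})
        if result.get("errorId"):
            raise RuntimeError(f"getTaskResult failed: {result}")
        if result.get("status") == "ready":
            return result["solution"]
    raise TimeoutError(f"task {task_id} not ready after {timeout} seconds")
```

For a reCAPTCHA v2 task, the docs describe the token as `solution["gRecaptchaResponse"]` (again, confirm for your task type); that value is what step three sends to the target site.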

Here is a simplified example based on the CapSolver documentation for submitting a task:

```json
{
  "clientKey": "YOUR_API_KEY",
  "task": {
    "type": "ReCaptchaV2TaskProxyLess",
    "websiteURL": "https://www.example.com",
    "websiteKey": "6LcR_okUAAAAAPYrPe-z_bx1oYxq6zz_S0vO49zV"
  }
}
```

Comparison Summary: Scraping Techniques

To help you choose the right approach, here is a comparison of common methods.

| Method | Effectiveness | Implementation Complexity | Cost |
|---|---|---|---|
| Basic Headers | Low | Low | Free |
| Data Center Proxies | Medium | Medium | Low |
| Residential Proxies | High | Medium | Moderate |
| Headless Browsers | High | High | High (compute resources) |
| CAPTCHA Solvers | Essential for CAPTCHA-protected pages | Low | Low |

Ethical and Compliance Considerations

When learning how to handle web scraping blocks, it is vital to emphasize ethical practices. Automated data collection should always respect the target website's terms and server health. Always check a domain's robots.txt file to see which areas are restricted and whether it requests a crawl delay. Following these web scraping best practices reduces your legal risk and helps preserve your data sources over the long term.
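Python's standard library can perform the robots.txt check for you. This sketch parses an inline, hypothetical robots.txt so it runs offline; against a live site you would instead call `set_url("https://www.example.com/robots.txt")` and then `read()` on the same `RobotFileParser`. The agent name `my-scraper` and the `load_rules` helper are placeholders.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content for illustration.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""


def load_rules(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text into a parser that can answer access questions."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


rules = load_rules(ROBOTS_TXT)
agent = "my-scraper"  # placeholder agent name
print(rules.can_fetch(agent, "https://www.example.com/products"))   # True
print(rules.can_fetch(agent, "https://www.example.com/private/a"))  # False
print(rules.crawl_delay(agent))                                     # 5
```

When `crawl_delay` returns a value, honoring it as the minimum pause between requests is the simplest way to respect the site's stated limits.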

For those looking for more advanced tools, exploring the best data extraction tools can provide additional insights into building resilient systems.

Why a Dedicated CAPTCHA Strategy Matters

As bot detection technology evolves, maintaining a scraper becomes more complex. Many developers find that their web automation keeps failing on CAPTCHAs because it lacks a dedicated handling strategy. A dedicated CAPTCHA solver lets you focus on data analysis rather than constantly fixing broken scripts, and integrating such a service into your stack can substantially improve success rates on heavily protected platforms.

Conclusion

Mastering how to handle web scraping blocks requires a multi-layered approach that combines technical precision with ethical responsibility. By understanding detection logic, implementing robust proxy management, and utilizing specialized solving services, you can build reliable data pipelines. Remember that the goal is not just to bypass a single barrier, but to create a sustainable system that respects the digital ecosystem while delivering the insights your business depends on.

FAQ

1. Why am I still getting blocked even with proxies?

Blocks can persist because of browser fingerprinting or inconsistent headers. Make sure your time zone and Accept-Language header match the proxy's geographic location, and that your real IP is not leaking through WebRTC.

2. Is it legal to bypass web scraping blocks?

The legality depends on your jurisdiction, the website's terms of service, and the type of data you collect. Scraping publicly available data is often permitted, but you must still respect copyright and personal data protection laws.

3. How often should I rotate my User-Agent?

It is best to use a new User-Agent for every new session or every few hundred requests, especially if you are also rotating your IP address.

4. Can headless browsers prevent all blocks?

While helpful, headless browsers like Puppeteer or Playwright can still be detected through specific properties, such as the navigator.webdriver flag. Developers often use "stealth" plugins to mask these automation signals.

5. What is the most cost-effective way to handle CAPTCHAs?

Using an API-based solving service like CapSolver is typically more cost-effective than building your own ML models or using manual labor, as it offers high speed and accuracy at a low per-task cost.
