How to Use geziyor for Web Scraping

Aloísio Vítor

Image Processing Expert

Geziyor: A Powerful Web Scraping Framework for Go

Geziyor is a modern web scraping framework for Go, designed to offer powerful tools for scraping websites and extracting data efficiently. Unlike many traditional scraping libraries, Geziyor emphasizes ease of use while providing highly customizable scraping workflows.

Key Features:

- Concurrency Support: It supports asynchronous operations, allowing you to scrape multiple pages concurrently, which boosts performance.

- Request Customization: Easily modify HTTP requests, including headers, cookies, and custom parameters.

- Automatic Throttling: Helps avoid triggering anti-scraping mechanisms by pacing the requests to servers.

- Built-in Caching and Persistence: It supports caching scraped data and responses to avoid redundant requests.

- Extensibility: Offers hooks to extend functionality or handle events like request/response interception, custom middlewares, and more.

- Supports Proxies: Easily integrate proxies for rotating IPs or bypassing restrictions.

Prerequisites

To use Geziyor, ensure you have:

- Go 1.12+ installed from the official Go website.

- Basic knowledge of the Go language.

Installation

To install Geziyor, you can run:

```bash
go get -u github.com/geziyor/geziyor
```

Basic Example: Web Scraping with Geziyor

Here is a simple example to scrape a website and print the titles of the articles:

```go
package main

import (
	"log"

	"github.com/PuerkitoBio/goquery"
	"github.com/geziyor/geziyor"
	"github.com/geziyor/geziyor/client"
)

func main() {
	geziyor.NewGeziyor(&geziyor.Options{
		StartURLs: []string{"https://news.ycombinator.com"},
		ParseFunc: func(g *geziyor.Geziyor, r *client.Response) {
			// HTMLDoc is only populated for HTML responses.
			if r.HTMLDoc == nil {
				return
			}
			// Story links are assumed to sit inside span.titleline; older
			// Hacker News markup used a.storylink. Adjust if the markup changes.
			r.HTMLDoc.Find(".titleline > a").Each(func(i int, s *goquery.Selection) {
				log.Println(s.Text())
			})
		},
	}).Start()
}
```

Advanced Example: Scraping with Custom Headers and POST Requests

Sometimes, you need to simulate a more complex interaction with the server, like logging in or interacting with dynamic websites. In this example, we will show how to send a custom header and a POST request.

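```go
package main

import (
	"log"
	"strings"

	"github.com/geziyor/geziyor"
	"github.com/geziyor/geziyor/client"
)

func main() {
	geziyor.NewGeziyor(&geziyor.Options{
		StartRequestsFunc: func(g *geziyor.Geziyor) {
			body := strings.NewReader(`{"username": "test", "password": "123"}`)

			// client.NewRequest wraps http.NewRequest, so the method, URL and
			// body are passed as arguments and headers are set on req.Header.
			req, err := client.NewRequest("POST", "https://httpbin.org/post", body)
			if err != nil {
				log.Println("building request:", err)
				return
			}
			req.Header.Set("Content-Type", "application/json")

			// g.Do takes the request and the callback that handles its response.
			g.Do(req, g.Opt.ParseFunc)
		},
		ParseFunc: func(g *geziyor.Geziyor, r *client.Response) {
			log.Println(string(r.Body))
		},
	}).Start()
}
```

Handling Cookies and Sessions in Geziyor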

You might need to manage cookies or maintain sessions during scraping. Geziyor simplifies cookie management by automatically handling cookies for each request, and you can also customize the cookie handling process if needed.
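What automatic cookie handling amounts to can be sketched with the standard library alone. The snippet below is not Geziyor code: it uses `net/http/cookiejar` to show which cookies a jar-backed client would send back after a response set one. The helper name `cookieHeaderFor`, the URLs, and the cookie values are hypothetical and chosen only for illustration.

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/cookiejar"
	"net/url"
	"strings"
)

// cookieHeaderFor stores one cookie as if a response from source had set it,
// then returns the Cookie header value a jar-backed client would send to target.
func cookieHeaderFor(source, target, name, value string) (string, error) {
	jar, err := cookiejar.New(nil)
	if err != nil {
		return "", err
	}
	src, err := url.Parse(source)
	if err != nil {
		return "", err
	}
	dst, err := url.Parse(target)
	if err != nil {
		return "", err
	}

	// Equivalent to receiving "Set-Cookie: name=value; Path=/" from source.
	jar.SetCookies(src, []*http.Cookie{{Name: name, Value: value, Path: "/"}})

	// Ask the jar which cookies apply to the target URL.
	parts := []string{}
	for _, c := range jar.Cookies(dst) {
		parts = append(parts, c.Name+"="+c.Value)
	}
	return strings.Join(parts, "; "), nil
}

func main() {
	same, err := cookieHeaderFor("https://example.com/login", "https://example.com/account", "session", "abc123")
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	other, err := cookieHeaderFor("https://example.com/login", "https://shop.example.org/", "session", "abc123")
	if err != nil {
		fmt.Println("error:", err)
		return
	}
	fmt.Printf("same site:  %q\n", same)  // "session=abc123"
	fmt.Printf("other site: %q\n", other) // ""
}
```

The session cookie follows the client to other pages on the same host and is withheld from unrelated hosts. That is the behaviour a login-then-scrape workflow depends on.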

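```go
package main

import (
	"log"

	"github.com/geziyor/geziyor"
	"github.com/geziyor/geziyor/client"
)

func main() {
	geziyor.NewGeziyor(&geziyor.Options{
		StartRequestsFunc: func(g *geziyor.Geziyor) {
			// httpbin sets the cookie and then redirects to /cookies.
			g.Get("https://httpbin.org/cookies/set?name=value", g.Opt.ParseFunc)
		},
		ParseFunc: func(g *geziyor.Geziyor, r *client.Response) {
			// r.Cookies() lists only the Set-Cookie headers of this final
			// response, so it may be empty after the redirect.
			log.Println("Cookies:", r.Cookies())
			// The /cookies body echoes the cookies the client sent back.
			log.Println("Body:", string(r.Body))
		},
	}).Start()
}
```

Using Proxies with Geziyor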

To scrape a website while avoiding IP restrictions or blocks, you can route your requests through a proxy. Here's how to configure proxy support with Geziyor:

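```go
package main

import (
	"log"

	"github.com/geziyor/geziyor"
	"github.com/geziyor/geziyor/client"
)

func main() {
	geziyor.NewGeziyor(&geziyor.Options{
		// Proxies are configured on the crawler through Options.ProxyFunc.
		// RoundRobinProxy rotates through the URLs it is given; a single
		// URL behaves as a fixed proxy.
		ProxyFunc: client.RoundRobinProxy("http://username:password@proxyserver:8080"),
		StartRequestsFunc: func(g *geziyor.Geziyor) {
			g.Get("https://httpbin.org/ip", g.Opt.ParseFunc)
		},
		ParseFunc: func(g *geziyor.Geziyor, r *client.Response) {
			// httpbin reports the IP it saw, which should be the proxy's.
			log.Println(string(r.Body))
		},
	}).Start()
}
```

Handling Captchas with Geziyor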

While Geziyor doesn’t natively solve captchas, you can integrate it with a captcha-solving service such as CapSolver. Here's how you can use CapSolver to solve captchas in conjunction with Geziyor.

Example: Solving ReCaptcha V2 Using Geziyor and CapSolver

First, you need to integrate CapSolver and handle requests for captcha challenges.
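The piece that is easiest to get wrong is reading the polling reply. The sketch below decodes a `getTaskResult` response under the assumption that it carries `errorId`, `errorDescription`, `status`, and a `solution` object whose `gRecaptchaResponse` field holds the token. Check these field names against CapSolver's current documentation. The `taskResult` type, the `parseTaskResult` helper, and the sample payloads are made up for illustration.

```go
package main

import (
	"encoding/json"
	"errors"
	"fmt"
)

// taskResult mirrors the subset of the reply used here.
// The field names are an assumption about CapSolver's response format.
type taskResult struct {
	ErrorID          int    `json:"errorId"`
	ErrorDescription string `json:"errorDescription"`
	Status           string `json:"status"`
	Solution         struct {
		Token string `json:"gRecaptchaResponse"`
	} `json:"solution"`
}

// parseTaskResult returns the token once the task is ready.
// ready == false with a nil error means the caller should poll again.
func parseTaskResult(raw []byte) (token string, ready bool, err error) {
	var res taskResult
	if err := json.Unmarshal(raw, &res); err != nil {
		return "", false, err
	}
	if res.ErrorID != 0 {
		return "", false, errors.New("capsolver: " + res.ErrorDescription)
	}
	if res.Status != "ready" {
		return "", false, nil
	}
	return res.Solution.Token, true, nil
}

func main() {
	// Made-up sample payloads, not captured from the real service.
	samples := []string{
		`{"errorId":0,"status":"processing"}`,
		`{"errorId":0,"status":"ready","solution":{"gRecaptchaResponse":"TOKEN"}}`,
	}
	for _, s := range samples {
		token, ready, err := parseTaskResult([]byte(s))
		fmt.Printf("token=%q ready=%v err=%v\n", token, ready, err)
	}
}
```

Decoding into a typed struct avoids the runtime panic that an unchecked type assertion on a `map[string]interface{}` causes when a field is missing or has a different shape.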

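```go
package main

import (
	"bytes"
	"encoding/json"
	"errors"
	"log"
	"net/http"
	"net/url"
	"strings"
	"time"

	"github.com/geziyor/geziyor"
	"github.com/geziyor/geziyor/client"
)

const capsolverKey = "YourKey"

// postJSON sends payload to a CapSolver endpoint with net/http and decodes
// the JSON reply into out. These calls do not go through Geziyor.
func postJSON(endpoint string, payload, out interface{}) error {
	data, err := json.Marshal(payload)
	if err != nil {
		return err
	}
	resp, err := http.Post(endpoint, "application/json", bytes.NewReader(data))
	if err != nil {
		return err
	}
	defer resp.Body.Close()
	return json.NewDecoder(resp.Body).Decode(out)
}

// createTask submits a ReCaptcha V2 task and returns its task ID.
func createTask(pageURL, siteKey string) (string, error) {
	payload := map[string]interface{}{
		"clientKey": capsolverKey,
		"task": map[string]interface{}{
			"type":       "ReCaptchaV2TaskProxyLess",
			"websiteURL": pageURL,
			"websiteKey": siteKey,
		},
	}
	var result struct {
		ErrorID          int    `json:"errorId"`
		ErrorDescription string `json:"errorDescription"`
		TaskID           string `json:"taskId"`
	}
	if err := postJSON("https://api.capsolver.com/createTask", payload, &result); err != nil {
		return "", err
	}
	if result.ErrorID != 0 || result.TaskID == "" {
		return "", errors.New("createTask failed: " + result.ErrorDescription)
	}
	return result.TaskID, nil
}

// getTaskResult polls until the task is ready and returns the captcha token.
func getTaskResult(taskID string) (string, error) {
	payload := map[string]interface{}{"clientKey": capsolverKey, "taskId": taskID}
	// The cap of 24 attempts (about two minutes) is an arbitrary choice.
	for attempt := 0; attempt < 24; attempt++ {
		var result struct {
			ErrorID          int    `json:"errorId"`
			ErrorDescription string `json:"errorDescription"`
			Status           string `json:"status"`
			Solution         struct {
				Token string `json:"gRecaptchaResponse"`
			} `json:"solution"`
		}
		if err := postJSON("https://api.capsolver.com/getTaskResult", payload, &result); err != nil {
			return "", err
		}
		if result.ErrorID != 0 {
			return "", errors.New("getTaskResult failed: " + result.ErrorDescription)
		}
		if result.Status == "ready" {
			return result.Solution.Token, nil
		}
		time.Sleep(5 * time.Second)
	}
	return "", errors.New("timed out waiting for captcha solution")
}

func main() {
	geziyor.NewGeziyor(&geziyor.Options{
		StartRequestsFunc: func(g *geziyor.Geziyor) {
			taskID, err := createTask("https://example.com", "6LcR_okUAAAAAPYrPe-HK_0RULO1aZM15ENyM-Mf")
			if err != nil {
				log.Println(err)
				return
			}
			token, err := getTaskResult(taskID)
			if err != nil {
				log.Println(err)
				return
			}

			// Submit the token as a URL-encoded form field.
			form := url.Values{"g-recaptcha-response": {token}}
			req, err := client.NewRequest("POST", "https://example.com/submit", strings.NewReader(form.Encode()))
			if err != nil {
				log.Println(err)
				return
			}
			req.Header.Set("Content-Type", "application/x-www-form-urlencoded")
			g.Do(req, g.Opt.ParseFunc)
		},
		ParseFunc: func(g *geziyor.Geziyor, r *client.Response) {
			log.Println("Captcha Solved:", string(r.Body))
		},
	}).Start()
}
```

Performance Optimizations with Geziyor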

Geziyor excels at handling high-volume scraping tasks, but performance can be further optimized by adjusting certain options:

- Concurrency: Increase ConcurrentRequests to allow multiple parallel requests.

- Request Delay: Implement a delay between requests to avoid detection.
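How these two settings interact can be sketched without the framework. The snippet below is a standard-library illustration, not Geziyor's implementation: a buffered channel acts as a counting semaphore that caps parallel work, and a sleep between launches paces request starts. The `crawl` function, the URLs, and the simulated fetch are hypothetical.

```go
package main

import (
	"fmt"
	"sync"
	"time"
)

// crawl calls fetch once per URL. It waits delay between launches and never
// runs more than limit calls at the same time. It returns the highest number
// of simultaneous calls it observed.
func crawl(urls []string, limit int, delay time.Duration, fetch func(string)) int {
	sem := make(chan struct{}, limit) // counting semaphore with limit slots
	var (
		wg       sync.WaitGroup
		mu       sync.Mutex
		inFlight int
		peak     int
	)
	for i, u := range urls {
		if i > 0 {
			time.Sleep(delay) // pace request starts
		}
		sem <- struct{}{} // blocks while limit calls are already running
		wg.Add(1)
		go func(u string) {
			defer wg.Done()
			defer func() { <-sem }() // free the slot when this call ends

			mu.Lock()
			inFlight++
			if inFlight > peak {
				peak = inFlight
			}
			mu.Unlock()

			fetch(u)

			mu.Lock()
			inFlight--
			mu.Unlock()
		}(u)
	}
	wg.Wait()
	return peak
}

func main() {
	urls := []string{"https://example.com/1", "https://example.com/2", "https://example.com/3", "https://example.com/4"}
	peak := crawl(urls, 2, 10*time.Millisecond, func(u string) {
		time.Sleep(50 * time.Millisecond) // stand-in for a real HTTP request
		fmt.Println("fetched", u)
	})
	fmt.Println("peak concurrency:", peak)
}
```

Raising the limit increases throughput only until the delay becomes the bottleneck. With a long delay and fast responses, few requests overlap regardless of the concurrency setting.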

Example with concurrency and delay:

go

package main

import (

"github.com/geziyor/geziyor"

"github.com/geziyor/geziyor/client"

)

func main() {

geziyor.NewGeziyor(&geziyor.Options{

StartURLs: []string{"https://example.com"},

ParseFunc: func(g *geziyor.Geziyor, r *client.Response) {},

ConcurrentRequests: 10,

RequestDelay: 2,

}).Start()

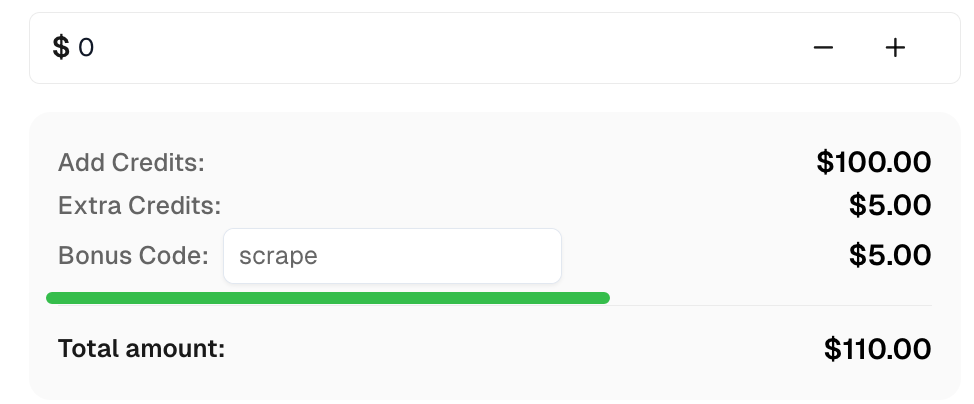

}Bonus Code

Claim your Bonus Code for top captcha solutions at CapSolver: scrape. After redeeming it, you will get an extra 5% bonus after each recharge, unlimited times.

Conclusion

Geziyor is a powerful, fast, and flexible web scraping framework for Go, making it a great choice for developers looking to build scalable scraping systems. Its built-in support for concurrency, customizable requests, and the ability to integrate with external services like CapSolver make it an ideal tool for both simple and advanced scraping tasks.

Whether you're collecting data from blogs, e-commerce sites, or building custom scraping pipelines, Geziyor has the features you need to get started quickly and efficiently.
